Explorers into unknown territory face plenty of risks. One that doesn’t always get the attention it deserves is the possibility that they know less about the country ahead than they think. Inaccurate maps, jumbled records, travelers’ tales that got garbled in transmission or were made up in the first place: all these and more have laid their share of traps at the feet of adventurers on their way to new places and accounted for an abundance of disasters. As we make our way willy-nilly into that undiscovered country called the future, a similar rule applies.

Christopher Columbus, when he set sail on the first of his voyages across the Atlantic, brought with him a copy of The Travels of Sir John Mandeville, a fraudulent medieval travelogue that claimed to recount a journey east to the Earthly Paradise across a wholly imaginary Asia, packed full of places and peoples that never existed. Columbus’ eager attention to that volume seems to have played a significant role in keeping him hopelessly confused about the difference between the Asia of his dreams and the place where he’d actually arrived. It’s a story more than usually relevant today, because most people nowadays are equipped with comparable misinformation for their journey into the future, and are going to end up just as disoriented as Columbus.

I’ve written at some length already about some of the stage properties with which the Sir John Mandevilles of science fiction and the mass media have stocked the Earthly Paradises of their technofetishistic dreams: flying cars, space colonies, nuclear reactors that really, truly will churn out electricity too cheap to meter, and the rest of it. (It occurs to me that we could rework a term coined by the late Alvin Toffler and refer to the whole gaudy mess as Future Schlock.) Yet there’s another delusion, subtler but even more misleading, that pervades current notions about the future and promises an even more awkward collision with unwelcome realities.

That delusion? The notion that we can decide what future we’re going to get, and get it.

It’s hard to think of a belief more thoroughly hardwired into the collective imagination of our time. Politicians and pundits are always confidently predicting this or that future, while think tanks earnestly churn out reports on how to get to one future or how to avoid another. It’s not just Klaus Schwab and his well-paid flunkeys at the World Economic Forum, chattering away about their Orwellian plans for a Great Reset; with embarrassingly few exceptions, from the far left to the far right, everyone’s got a plan for the future, and acts as though all we have to do is adopt the plan and work hard, and everything will fall into place.

What’s missing in this picture is any willingness to compare that rhetoric to reality and see how well it performs. Over the last century or so we’ve had plenty of grand plans that set out to define the future, you know. We’ve had a War on Poverty, a War on Drugs, a War on Cancer, and a War on Terror, just for starters—how are those working out for you? War was outlawed by the Kellogg-Briand Pact in 1928, the United States committed itself to provide a good job for every American in the Full Employment and Balanced Growth Act of 1978, and of course we all know that Obamacare was going to lower health insurance prices and guarantee that you could keep your existing plan and physician. Here again, how did those work out for you?

This isn’t simply an exercise in sarcasm, though I freely admit that political antics of the kind just surveyed have earned their share of ridicule. The managerial aristocracy that came to power in the early twentieth century across the industrial world defined its historical mission as taking charge of humanity’s future through science and reason. Rational planning carried out by experts guided by the latest research, once it replaced the do-it-yourself version of social change that had applied before that point, was expected to usher in Utopia in short order. That was the premise, and the promise, under which the managerial class took power. With a century of hindsight, it’s increasingly clear that the premise was quite simply wrong and the promise was not kept.

Could it have been kept? Very few people seem to doubt that. The driving force behind the popularity of conspiracy culture these days is the conviction that we really could have the glossy high-tech Tomorrowland promised us by the media for all these years, if only some sinister cabal hadn’t gotten in the way. Exactly which sinister cabal might be frustrating the arrival of Utopia is of course a matter of ongoing dispute in the conspiracy scene; all the familiar contenders have their partisans, and new candidates get proposed all the time. Now that socialism is back in vogue in some corners of the internet, for that matter, the capitalist class has been dusted off and restored to its time-honored place in the rogues’ gallery.

There’s a fine irony in that last point, because socialist management was no more successful at bringing on the millennium than the capitalist version. Socialism, after all, is the extreme form of rule by the managerial aristocracy. It takes power claiming to place the means of production in the hands of the people, but in practice “the people” inevitably morphs into the government, and that amounts to cadres drawn from the managerial class, with their heads full of the latest fashionable ideology and not a single clue about how things work outside the rarefied realm of Hegelian dialectic. Out come the Five-Year Plans and all the other impedimenta of central planning…and the failures begin to mount up. Fast forward a lifetime or so and the Workers’ Paradise is coming apart at the seams.

A strong case can be made, in fact, that managerial socialism is one of the few systems of political economy that is even less successful at meeting human needs than the managerial corporatism currently staggering to its end here in the United States. (That’s why it folded first.) The differences between the two systems are admittedly not great: under managerial socialism, the people who control the political system also control the means of production, while under managerial corporatism, why, it’s the other way around. Thus I suggest it’s time to go deeper, and take a hard look at the core claim of both systems—the notion that some set of experts or other, whether the experts in question are corporate flacks or Party apparatchiks, can make society work better if only they have enough power and the rest of us shut up and do what we’re told.

That claim is more subtle and more problematic than it looks at first glance. To make sense of it, we’re going to have to talk about the kinds of knowledge we can have about the world.

The English language is unusually handicapped in understanding the point I want to make, because most languages have two distinct words for the kinds of knowledge we’ll be talking about, and English has only one word, “knowledge,” that has to do double duty for both of them. In French, for example, if you want to say that you know something, you have to ask yourself what kind of knowledge it is. Is it abstract knowledge based on an intellectual grasp of principles? Then the verb you use is savoir. Is it concrete knowledge based on experience? Then the verb you use is connaître. Colloquial English has tried to fill the gap by coining the phrases “book learning” and “know-how,” and we’ll use these for now.

The first point that needs to be made here is that these kinds of knowledge are anything but interchangeable. If you know about cooking, say, because you’ve read lots of books on the subject and can easily rattle off facts at the drop of a hat, you have book learning. If you know about cooking because you’ve done a lot of it and can whip up a tasty meal from just about anything, you have know-how. Those are both useful kinds of knowledge, but they’re useful in different contexts, and one doesn’t convert readily into the other. You can know lots of facts about cooking and still be unable to produce an edible meal, for example, and you can be good at cooking and still be unable to say a meaningful word about how you do it.

We can sum up the two kinds of knowledge we’re discussing in a simple way: book learning is abstract knowledge, and know-how is concrete knowledge.

Let’s take a moment to make sense of this. Each of us, in earliest infancy, encounters the world as a “buzzing, blooming confusion” of disconnected sensations, and our first and most demanding intellectual challenge is the process that Owen Barfield has termed “figuration”—the task of assembling those sensations into distinct, enduring objects. We take an oddly shaped spot of bright color, a smooth texture, a kinesthetic awareness of gripping and of a certain resistance to movement, a taste, and a sense of satisfaction, and assemble them into an object. It’s the object we will later call “bottle,” but we don’t have that connection between word and experience at first. That comes later, after we’ve mastered the challenge of figuration.

So the infant who can’t yet speak has already amassed a substantial body of know-how. It knows that this set of sensations corresponds to this object, which can be sucked on and will produce a stream of tasty milk; this other set corresponds to a different object, which can be shaken to produce an entertaining noise, and so on. When you see an infant looking out with that odd distracted look so many of them have, as though it’s thinking for all it’s worth, you’re not mistaken—that’s exactly what it’s doing. Only when it has mastered the art of figuration, and gotten a good basic body of know-how about its surroundings, can it get to work on the even more demanding task of learning how to handle abstractions.

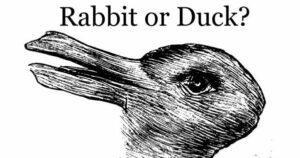

That process inevitably starts from the top down, with very broad abstractions covering vast numbers of different things. That’s why, at a certain stage in a baby’s growth, all four-legged animals are “goggie” or something of the same sort; later on, the broad abstractions break up, first into big chunks and then into smaller ones, until finally you’ve got a child with a good general vocabulary of abstractions. The process of figuration continues; in fact, it goes on throughout life. Most of us are good enough at it by the time of our earliest memories that we don’t even notice how quickly we do it. Only in special cases do we catch ourselves at it—certain optical illusions, for example, can be figurated in two competing ways, and consciously flipping back and forth between them lets us see the process at work.

All this makes the relationship between figurations and abstractions far more complex than it seems. Since each abstraction is a loosely defined category marked by a word, there are always gray areas and borderline cases, like those plants that are right on the line between trees and shrubs. The situation gets much more challenging, however, because abstractions aren’t objective realities. We don’t get handed them by the universe. We invent them to make sense of the figurations we experience, and that means our habits, biases, and individual and collective self-interest inevitably flow into them. That would be problematic even if figurations and abstractions stayed safely distinct from one another, but they don’t.

Once a child learns to think in abstractions, the abstractions they learn begin to shape their figurations, so that the world they experience ends up defined by the categories they learn to think with. That’s one of the consequences of language—and it’s also one of the reasons why book learning, which consists entirely of abstractions, is at once so powerful and so dangerous: your ideology ends up imprinting itself on your experience of the world. There’s a further mental operation that can help you past that; it’s called reflection, and involves thinking about your thinking, but it’s hard work and very few people do much of it, and the only kind that’s popular in an abstraction-heavy society — the kind where you check your own abstractions against an approved set to make sure you don’t think any unapproved thoughts — just digs you in deeper. As a result, most people go through their lives never noticing that their worlds are being defined by an arbitrary set of categories with which they’ve been taught to think.

Here are some examples. Many languages have no word for “orange.” People who grow up speaking those languages see the lighter shades of what we call “orange” as shades of yellow, and the darker shades as shades of red. They don’t see the same world we do, since the abstractions they’ve learned to think with sort out their figurations in different ways. In some Native American languages, some colors are “wet” and others are “dry,” and people who grow up speaking those languages experience colors as being more or less humid; the rest of us don’t. Then there’s Chinook jargon, the old trade language of the Pacific Northwest, which was spoken by native peoples and immigrants alike until a century ago. In that language, there are only four colors: tkope, which means “white;” klale, which means “dark;” pil, which means red or orange or yellow or brightly colored; and spooh, which means “faded,” like sun-bleached wood or a pair of old blue jeans. Can you see a cherry and a lemon as being shades of the same color? If you’d grown up speaking Tsinuk wawa from earliest childhood, you would.

Those examples are harmless. Many other abstractions are not, because privilege and power are among the things that guide the shaping of abstract knowledge, and when education is controlled by a ruling class or a governmental bureaucracy, the abstractions people learn veer so far from experience that not even heroic efforts at figuration can bridge the gap. In the latter days of the Soviet Union, to return to an earlier example, the abstractions flew thick and fast, painting the glories of the Workers’ Paradise in gaudy colors, and insisting that any delays in the onward march of Soviet prosperity would soon be fixed by the skilled central planning of managerial cadres. Meanwhile, for the vast majority of Soviet citizens, life became a constant struggle with hopelessly dysfunctional bureaucratic systems, and waiting in long lines for basic necessities was an everyday fact of life.

None of that was accidental. The more tightly you focus your educational system on a set of approved abstractions, and the more inflexibly you assume that your ideology is more accurate than the facts, the more certain you can be that you will slam headfirst into one self-inflicted failure after another. The Soviet managerial aristocracy never grasped that, and so the burden of dealing with the gap between rhetoric and reality fell entirely on the rest of the population. That was why, when the final crisis came, the descendants of the people who stormed the Winter Palace in 1917, and rallied around the newborn Soviet state in the bitter civil war that followed, simply shrugged and let the whole thing come crashing down.

We’re arguably not far from similar scenes here in the United States, for the same reasons: the gap between rhetoric and reality gapes just as wide in Biden’s America as it did in Chernenko’s Soviet Union. When a ruling class puts more stress on using the right abstractions than on getting the right results, those who have to put up with the failures—i.e., the rest of us—withdraw their loyalty and their labor from the system, and sooner or later, down it comes.

In the meantime, as we all listen to the cracking noises coming up from the foundations of American society, I’d like to propose that we consider the possibility that the future cannot be managed, and that all those plans and programs and grand agendas are by definition on their way to the same dumpster as the Five-Year Plans of the Soviet Union and the various Wars on Abstract Nouns proclaimed by the United States. Coming up with a plan is easy; getting people to do anything about it is hard; getting future events to cooperate—well, you can do the math as well as I can. It’s already clear to anyone who’s paying attention that we’re not going to get the Tomorrowland future bandied about for so many years by the pundits and marketing flacks of the corporate state: the flying cars, spaceships, nuclear power plants, and the rest of it have all been tried and all turned out to be white elephants, hopelessly overpriced for the limited value they provide. Maybe it’s time to consider the possibility that no other grand plan will work any better.

Does that mean that we shouldn’t prepare for the future? Au contraire, it means that we can do a much better job of preparing for the futures. There’s not just one of them, you see. There never is. The same habit of bad science fiction writers that editors used to lampoon with the phrase “It was raining on Mongo that Monday” (really? The same weather, all over an entire planet?) pervades current notions of “the” future. Choose any year in the past and look at what happened over the next decade or two to different cities and countries and continents, and you’ll find that their futures were unevenly distributed: some got war and some got peace, growth in one place was matched by contraction in another, and the experience of any decade you care to name was radically different depending on where you experienced it. That’s one of the things that the managerial aristocracy, with its fixation on abstract knowledge, reliably misses.

We know some things about the range of possible futures ahead of us. We know that fossil fuels and other nonrenewable resources are going to be increasingly expensive and hard to get by historic standards; we know that the impact of decades of unrestricted atmospheric pollution will continue to destabilize the climate and drive unpredictable changes in rainfall and growing seasons; we know that the infrastructure of the industrial nations, which was built under the mistaken belief that there would always be plenty of cheap energy and resources, will keep on decaying into uselessness; we know that habits and lifestyles dependent on the extravagant energy and resource usage that was briefly possible in the late twentieth century are already past their pull date. These things are certain—but they don’t tell us that much. What technologies and social forms will replace the clanking anachronism of industrial society over the decades immediately ahead? We don’t know that, and indeed we can’t know it.

We can’t know it because the future is not an abstraction. It’s not something neat and manageable that can be plotted in advance by corporate functionaries and ordered for just-in-time delivery. It’s an unknown region, and our preconceptions about it are the most important obstacle in the way of seeing it for what it is. That is to say, if you’re setting out to explore unfamiliar territory, deciding in advance what you’re going to find there and marching off in a predetermined direction to find it is a great way to end up neck-deep in a swamp as the crocodiles close in.

If you want a less awkward end to your great adventure, try heading into the unknown with eyes and ears open wide, pay attention to what’s happening around you even (or especially) when it contradicts your beliefs and presuppositions, and choose your path based on what you find rather than what you think has to be there. Choose your tools and traveling gear so that they can cope with as many things as possible, and when you pick your companions, remember that know-how is much more useful than book learning. That way you can travel light, meet the unexpected with some degree of grace, and have a better chance of finding a destination that’s worth reaching.

I think we’re coming to the acceptance phase.

https://dailycaller.com/2022/01/06/poll-trafalgar-america-decline/

@ JMG – Wonderful essay, and quite timely. While there’s a longer version to this question, let me put the short version to you, and we can proceed from there: once the Tamanous culture really starts to take off, do you think there will still be people longing for a return to industrial civilization, or will the attractiveness of the new culture finally put Tomorrowland-nostalgia to bed?

In this week’s Torah Portion Jews learn about Jethro, the Midianite high priest who explores every deity in the universe before he commits to follow God. In a negative sense, this could be seen as the ultimate accession to order, but it might also be understood as a metaphor for what you seem to be saying: Jethro gives up his marriage to any particular path and commits to embracing the unknowable greatness of the universe. “Isms” have been passé for about three thousand years, I guess.

Brilliant essay….thanks so much

I’ve recently read The Retro Future and The King in Orange. The question of the future often comes to mind. The answers are always an unsatisfactory “be prepared”.

Another forum I frequent has started studying Gurdjieff. Do you know his work?

What do you think of the types of organisation and management used by libertarian socialists and anarchists, to some extent early on in Russia, but especially in the Spanish Revolution?

Hi JMG

That was a brilliant essay. I am so glad my family has had the benefit of TDAR. Indeed, I bought the collected essays and read a bit every day. I feel we are very well placed for whatever future is coming, thanks to you and J.H. Kunstler.

We have a snug little farm that we work, mostly, by hand. The sheep are bred and will be milked over the summer and fall and I will make cheese from their delicious milk as I have done for years now. Our huge vegetable garden is at the mature stage with good soil and the rabbits have never bred so well. We have tons of compost and manure. I have got the Garden Club members here growing their own seeds and pooling them for years now. The seed is usually excellent.

We have great friends who are also pretty well prepared for the future with a broad range of skills and a firm sense of facing the future with mutual support.

I just had the Survival Medicine Handbook by Joseph Alton MD and Amy Alton APRN sent to me. It looks quite useful. Things are going distinctly odd in Canada.

Much love and hugs from Maxine

It’s also important for those of us aware of and expecting collapse to remember we don’t know details, especially timing and location of downturns. Things can still take you by surprise.

And occasionally you get darkly hilarious situations like the following: walking along talking to my lone collapse aware family member, I complained that sometimes it was really hard to keep walking the path of less, and putting all that energy in, and choosing to forego things I could have had or done, when everyone around me wasn’t even trying and it felt like the future was being very much delayed. Part of me kind of wished it would just get on with it.

That was late December 2019. As of the next March, I wasn’t complaining about the work I’d put into making myself more collapse-resilient!

I personally differentiate the two categories of knowledge by referring to matters as matters of intelligence or matters of wisdom.

Honestly, one of the biggest failures in society, and a common train of thought through our bloody history, is the idea that one can measure people using a universal objective standard. I’ve literally just seen some fallout from someone who believed that one’s IQ, or stricter surveillance and data mining, could be used to determine people’s needs better…but what are people’s needs if those needs, people, and situations are unpredictable?

It’s frustrating, almost infuriating, because everywhere I go, that micromanagement of my actions, my beliefs, even my identity, is being utilized not just against myself but against other people. Like Socrates in Plato’s Apology, people are being pushed into this corner where nothing can be done but try to defend ourselves against public opinion, and despite our efforts and despite truth coming out, we are condemned to death.

A great essay. It reminds me of a book I recently read, “The Cubans”. The story of Cuba since Castro took over is a complex one. The state still relentlessly pushes that ‘the revolution’ will continue and bring the workers’ paradise of the future. Very few Cubans believe it anymore, and most of the world is in some level of disbelief that the current post-Castro regime has managed to hold on. We on the outside see a governing body past its pull date and are watching with curiosity; what will happen now?

Yet we (in general) are blind to the inevitable change coming our way. Given the current political climate in the United States, I wouldn’t be surprised to see the Cuban way of life shined up for importation and used as a political plank for the left. They are green and ecologically sustainable, if you define eco-friendly to include occasional starvation and farm animals in the family bathroom.

I wonder how long it is going to take for industrial civilization to get the arrogance knocked out of it. And what we’ll be left with after people admit that tomorrowland will never come.

That duck-rabbit must be swimming and hopping around in the collective unconscious lately. I used the same example in an essay I wrote a few days ago, that I still haven’t published, to make a similar point.

It seems like figuration is something we do when we understand that we know very little, while we tend to Tlonify things when we’re sure we know a lot. If Borges’ Orbis Tertius exists, it’s a gaggle of unconscious participants who were initiated into the society by having first convinced themselves.

The good news is that a few PMC folks in my circle are beginning to notice the abstractions failing to line up with the territory, and though it frightens and confuses them, at least they are starting to wonder. Your advice to collapse now and avoid the rush could apply equally well to our worn out abstractions. Those who give them up and begin the painful work of refiguring the way they think about the world will fare better in the long run.

The uncertainty certainly bodes problematic for those of us in search of new careers amidst The Great Resignation. JMG, if you were 25 years old abandoning a career inappropriate for the times ahead, what would you consider as an alternative? (Can’t pick writing!) I’m struggling with this exploration especially taking into account the importance of the ‘Parallel Polis’—an enigmatic collective abstraction!—and our new careers within that society. Would love to know your (or any fellow tribesman’s) thoughts! Thank you.

Ryan

We need to plan for chaos. We need to design for flexibility and anti-fragility (thank you, Nassim Taleb).

Being a child raised by a blue collar tech worker and a biologist turned librarian, I remember well my first few lessons in economics, and their more practical application to business and supply chains. Everything needed to be super specialized, everything needed to be ‘just in time’.

In my mind I could already see the ecosystem equivalent of the thing: a rainforest, running riot with complexity, all calories accounted for, with nothing to spare. I could see the technological equivalent, an ultra complicated machine, running full tilt without backups or redundancy.

Naively, I would question my esteemed tutors: “What happens if this breaks? What about having backup?”

The patronizing response?: “We call that waste.”

Lesson learned, I guess. This is the type of foolish thinking you get when you let the abstractions (useful things though they are) start to blind you to reality.

John–

Reflecting on the process of formation, I was trying to figure out the value of breaking down the structures we’ve developed to give meaning to the universe. The thought popped in my head: “Without these abstractions, all would be formless and void.” And I suddenly saw Gen 1 in a very different light!

The things I’m most concerned about for the next year or so for society at large are:

-food price rises, food shortages, and food supply chain snafus

-other shortages and supply chain issues especially energy and metals

-assorted things related to covid, the response to covid, and the response to the response

-housing price rises or crashes

-insurgency, civil and inter-state war

-climate-change driven extreme weather events

-very high/hyperinflation, financial crash etc

-something out of the blue I haven’t considered that will slap us upside the head when we least expect it

It feels like there are a lot of serious downside risks this year.

What is your view on India, JMG?

A sailor trims the sheets for the wind that is, not the wind he wishes it would be!

I just had a conversation yesterday about the relationship between resilience and expectations. I think those are also closely related to this search for and identification of the future. What we can interact with is perhaps determined by what we can acknowledge and allow to be possible. Remember the dwarves at the very end of C. S. Lewis’s “The Last Battle”? So if you can’t make room in your imagination for decline, then all the signs of decline must be outliers… anomalies… not reliable data points.

Easily one of your best posts. Thank you.

I feel there’s a metaphor in here somewhere about empty promises and abstract bureaucracy, in the Wreck of the English Bay Barge (riffing on Gordon Lightfoot’s Wreck of the Edmund Fitzgerald)

“The bay, so they say, is a peril to sail

When the winds of November start whooshin’

If fully weighed down, might have sailed through Howe Sound

But the English Bay Barge, it was empty

Before the uproar, it was anchored offshore

When the gales of November came early

…

As the big barges go, it was bigger than most

With no crew when the northwinds were blowin’

The weather reports warned the cities and ports

Of a big bad atmospheric river

The floodgates of heaven turned up to eleven

And the northwinds are going to give ‘er”

And the people made an official joke of it: https://bc.ctvnews.ca/mobile/barge-chilling-beach-sign-erected-as-vessel-remains-resting-on-vancouver-shoreline-1.5708961

Left it rave reviews: https://bc.ctvnews.ca/mobile/barge-chilling-park-vancouverites-posting-online-reviews-of-the-grounded-barge-on-sunset-beach-1.5693585

“”Much more than we could have ever asked for, just stunning,” one user writes. “We visited the Barge the other evening and regret not doing so sooner. The whole experience was beyond majestic, you walk around the seawall corner, and boom, there she is in her full glory.””

And it is still there, as the company that owns it tried to move it twice by simply tugging it out, couldn’t, and now have no idea what to do. The longer it sits, the more people have opinions on how it must be done:

“”Many problems like this, Chan argued, comes down to a lack of transparency with environmental data.

“We’re just left completely in the dark,” said Chan, adding that Transport Canada has a lot of problems to solve in B.C. “It’s amazing how long governments can let crap like this go on. If it’s not killing anybody, it’s the kind of thing they can ignore for years and years.””

(this guy is full of crap, there is no fuel on a barge, this one was empty, and rust isn’t toxic)

And of course :

“On Twitter, members of the Indigenous community explained why the park board’s sign isn’t amusing for everyone.

“This barge sign was a fun joke but the work of decolonizing isn’t fun, it’s been harmful to be Indigenous to Vancouver yet utterly erased,” Ginger Gosnell-Myers, a fellow with SFU’s Urban Indigenous Policy/Planning, wrote after the first graffiti incident.”

(there was no sign there before, so no one was erased, they could have just requested an addition now that there is one)

It has fallen on the government regulator to give them new criteria of what plans are required to move it:

“The removal is a complex issue, with several factors to consider in addition to tide levels, including safety, security and environmental protection,” Transport Canada’s Sau Sau Liu wrote in an email to CTV News.”

And my favourite is, if you look at the pictures, there is a tiny chain attached to a tiny rock to anchor it. People thought it was another joke, but it’s actually the anchor legally required by Transport Canada (to make sure it won’t drift away again, naturally).

Thinking about what you know at a young age, I’ve got a couple of unusual ones.

They say it’s impossible to catch yourself falling asleep, but it did once spontaneously happen when I was about five years old. I was lying in bed and recognised a certain sensation and thought “I’m about to fall asleep.” I actually did and the next thing I knew I was waking up. It’s never happened since and I’ve never tried to make it happen again, but that one time was a very interesting experience.

When I was six I was walking along the road to infant school with my grandma. I thought “I’ve walked along here a lot of times.” And somehow realised “I have memory now!” Obviously I’d had memory before but somehow becoming aware of it in that way was a radical change in my sense of self.

Off topic, but I’d like to ask everyone for prayers and positive energy for my mum. She’s had a series of health issues over a short time. Including a fall into the sharp edge of a greenhouse that was minor but if it had been slightly different could have cut an artery, a really nasty and persistent cold, a severe stomach and gut upset, and now hip/knee pain. I think I may be at least partially responsible because of how badly I responded to my own recent health problems. I’m trying to undo the destructive thoughts but anyone who wants to help may have to get through some of my negative influence first.

Thanks JMG for the important messages in your post. I especially tend towards practicing being present to what is around me, and what is happening locally. It is a practice because I have a very good abstract sensibility that is seldom accurate. Like I estimate that that project of fixing up the greenhouse will cost “X” and will take “Y” time. Many times it takes much more of both. I’ve been concentrating on building up our infrastructure [garden beds, hand tools, sheep flock, wood burning appliances for examples] using what fossil fuel I have at my disposal, as well as the paid and donated physical help of others. One future is that we will be self sufficient enough to survive the ups and downs of the supply chain by growing our own veggies and either freezing them or putting them up in mason jars that do not require refrigeration. The possible scenarios you depict in your writings of the slow decline and the reality of what that means on the ground as concerns society and my family, resonate. I’m blessed with a big family, lots of know how, and also lots of book learning both practical and through others, spiritual. Those plans and efforts do not ensure that my futures are secure. I get that, and hope that others do likewise. I am along for the ride! Ah, Lakeland Territory, where art thou?

Such a fantastic essay (as always). These days, so many things (such as this essay) trigger my recollection of the astute (and droll) observation of the late American sage, Yogi Berra: “The future ain’t what it used to be.” Thank you for all your wonderful posts both here and on your dreamwidth site.

Thank you Mr. Greer for another great article. I would like to run two things by you.

First, you probably are already aware of this, but for whatever it’s worth when you get the chance look at the impact that Scottish common sense moral epistemology had on the formation of America. The basic idea behind Thomas Reid and other thinkers from his school of thought is that knowledge is spread out across the common people and that they often know better than the abstract intellectual. This produces a political system where the experts have to justify themselves to the masses, not the other way around. I think when America has been successful it has stayed true to this kind of Declaration of Independence style epistemology rather than the elitism that is now failing. At some point in your life read Mark Noll’s “America’s God”, which is the best book on how this style of philosophy shaped our unique take on religious expression. I’m sure you’d get a lot out of it.

Second, I have to tell you the material you cover here is basically the story of my life. I am a trained academic philosopher who could not find work in the academy, and almost certainly never will. So now I work bending steel in a factory. The transition to blue collar work over the last several years has been humbling, because I had to learn the hard way that so called “unskilled” labor is actually quite skilled. The ability to stand on concrete for 40 to 50 hours a week requires your body to adjust and you to learn how to pace yourself. The ability to remain focused on what is directly in front of you is essential in a factory; if I get distracted while running one of my machines I could easily lose a finger or even a hand. Others are just flat out better at learning this material than I am. They learn it faster and retain it longer. I have to force myself to conform to a new state of affairs, and all my years of abstract training are of little value in the moment to moment progression of things. I hope that when all is said and done I can have a foot in either door and be skilled at both types of thinking.

One of the things that used to drive me crazy when I was young was my mother cooking and when answering a question from me about preparation, the usual response was ‘until it looks right’. She couldn’t verbalize much about the process, only demonstrate it. Now that’s how I cook. Until it ‘looks right’.

How will we know when we have a workable future? Well, when it ‘looks right’. And there’s no way to put that into words really.

What, Jeff’s cockrocket isn’t going to save us?! I want my money back!

The only thing I can be certain about is that the future is going to be a bit more s***. I guess it is like walking across a frozen lake: what appears solid might not be, so best test each step and the way across sure ain’t a straight line.

What to actually do, though? The conclusion of The Black Swan said it for me: spread your bets, play around with different ideas, don’t hold too tightly.

JMG,

just a note about conspiracies.

There are people (I, for example) that believe that conspiracies are much more common than people expect.

At the same time, ALL the conspiracies I looked into have no grand plan, they are all done for the basest of reasons: money, eliminating a competitor for power or simply coverup a previous mistake (or conspiracy).

It’s just depressing – it reminds me of the long series of struggles, civil wars and assassinations that accompanied the final years of the Western Roman empire. People conspired, killed and died just so they can get the last scraps at the imperial table.

Dr. Malone was puzzled. They were the most educated people, those Germans and they went barking mad. Perhaps they went barking mad BECAUSE they were most educated to begin with? Such a thought never seemed to have crossed his mind but it definitely seems to have crossed yours. Perhaps people are going barking mad now, because they are so edumacated?

>Choose your tools and traveling gear so that it can cope with as many things as possible

In most eras, being THE BEST at one thing was the way to go. Do one thing, do it better, do it the best. I would claim in this era, that’s a recipe for failure. You want to be just good enough at many different things as you can, because you will probably be the only one around who can do something about it. This is the era of the jack-of-all-trades.

On futures and trees

Futures are open – and that is very good in more than one way, in my opinion.

I can imagine future like a living tree; some growing movements of the trunk, branches, twigs, unfolding of leaves can be – to a degree – predicted, based on some knowledge of growing trees. But they cannot be planned – except, perhaps, by the tree itself – to a degree, though. Not even the tree itself can predict all of the external influences of wind, changing temperature, insects…

However, people sometimes want to interpret things in the way they were taught in the childhood and one of the problems is, of course, when they forget what interpretation is and confuse it with the only possible truth. “Look at the tree,” they say “it’s green!” “Why,” say others “it’s brown!” (And they are all looking from a similar perspective, use the same language, at the same daytime…only at different angles: some see the leaves, others see the bark.) In the case of a tree the conflict might sadly end in cutting down the tree to explore what colour, texture, etc. it really is. But all this longing for the only possible and certain truth is probably driven by the human need for safety and unity and certainty – only wrongly and also badly translated from the psychological realm to the outer world!

I think that the openness of futures, even if they thus inevitably bring a lot of unpredictability and uncertainty is a good thing; it is good, too, that we cannot hew down futures as we can trees…or, can’t we? Cutting down a tree is hewing down one particular future for our world, so we should be very careful there, too….

Thank you, JMG, for a very inspirational essay!

With deep regards,

Markéta

Great post John. The last paragraph is gold. Especially, choosing who you travel with.

Dashui, I’m delighted to see this. When you hit middle age and realize that your youth is over with, if you accept that and adjust your habits to prioritize staying healthy, fit, and mentally flexible, you can count on a much better old age than if you plunge into denial and go around pretending that you’re still 18. (You also get laughed at less.) If the American people have the same sort of realization about their country, recognize that its age of empire is over and done with, and get to work fixing as many as possible of the problems that have gone unaddressed while our government’s been busy playing global policeman, this nation could also have a healthy old age.

Ben, if the traditional prediction is correct, we’re five centuries or so from the dawning of the future American great culture, and quite a bit further than that from the point at which it becomes self-aware enough to think of itself as something different from other cultures. What attitude people then will have toward the failed industrial societies of the distant past is anyone’s guess.

Geoff, interesting! I hadn’t encountered that tradition about Jethro before. Yes, it makes a workable metaphor.

Kristin, you’re welcome and thank you.

Piper, oh, it can be a little more detailed than that. I’d say focus on resilience, don’t clutch your own ideas too tightly, and remember that the corporate media is telling you one set of blatant lies and the people who insist they’re trying to change the system are telling you another set. As for Gurdjieff, yes, I’ve read some of his books (and more of Ouspensky’s) — it’s not my path, but I know people who seem to have gotten a lot out of it.

Yorkshire, there are a wide range of alternative options, and those are among them. Myself, I’m impressed by democratic syndicalism — I’ve done business with a lot of worker co-ops in which the people who do the job collectively make the decisions, and found them reliably better run than management-heavy corporations — but there’s plenty of room for experimentation.

Maxine, thank you for this. I’m very glad whenever I hear from anyone who’s listened and made appropriate preparations.

Pygmycory, oof! The universe does have a mordant sense of humor…

Copper, exactly. That’s the fatal flaw in the managerial vision: the conviction that people can be reduced to some simple quantitative measurement, and treated accordingly. The results are not good.

Dave T., there was already a sustained attempt to market the Cuban model in the US — did you see the frankly bootlicking video The Power of Community, which praised the Cuban response to the fall of the Soviet Union as a great model for dealing with peak oil? If you mentioned that there was a factor the video didn’t discuss — that Cuba is, ahem, a dictatorship — you got a very frosty response from the folks who were pushing it. It was pretty clear to me that they fancied themselves as members of a future US Politburo, handing down edicts to everyone else, and rounding up those who didn’t cooperate for internment in labor camps.

Pygmycory, it may take a good long time. My working guess is that a couple of hundred years from now you’ll still have people insisting we could have gone to the stars, blah blah blah.

Kyle, excellent! Yes, collapsing now begins with the collapse of failed abstractions. It’s painful, but it hurts less than having the floor drop out from under you without warning.

Ryan, I’d sort through my skills and interests, and find one that creates goods and/or services that actual human beings need or want. The careers that are going to implode most messily are those that feed the corporate machine, while people will find ways to pay for the things they themselves use.

Andrew001, that’s a great example. “We call that waste” means “in our abstraction, which is hopelessly detached from reality, we’re going to pretend that nothing ever goes wrong and therefore no backup is needed.” Such people should probably be condemned to fly in airplanes that are designed so that they are only strong enough to withstand the average amount of turbulence.

David BTL, good! “And God said, let there be abstractions.”

Pygmycory, I’m not going to argue with that at all.

Aaron, er, I have no idea how to answer this question. (Remember that I have Asperger’s syndrome and thus have no notion what nonverbal signals you think you’re sending.) My view about India in what context? Politics? Economics? Geology? Demographic trends? History of philosophy? The differences between Indian and Western classical music, with special attention to the distinction between tonality and dronal harmony? Please tell me in so many words what you’re asking, or I have no choice but to shrug and go on.

Woods Hippie, an excellent summary!

Michelle, that’s a very important point, and one I plan on addressing in a post soon. The neglect of the imagination is one of the major blind spots in our current notions of education.

Bruno, you’re welcome and thank you.

Pixelated, I heard about the barge, and also the beach that’s been named after it. You seem to have started a trend up there in BC; Florence, OR just officially named Exploding Whale Memorial Beach, after one of the great incidents in the city’s history.

Yorkshire, thanks for these! Positive energy en route for your mum.

Lawrence, the Lakeland Republic is a little like Plato’s republic, in that it exists wholly in the realm of the imagination. Could something of the sort come into being down the road? Depends on how many people buckle down to do something constructive about the challenges facing them.

John, thanks for this.

Stephen, thanks for this; I’ll definitely check that out. As for your current situation, it’s heartening that you rose to the challenge and outgrew the less helpful aspects of your training! Have you considered writing a book about your experience? You’d have to unlearn everything you were taught about writing as an academic — the whole point of academic prose, after all, is to make information inaccessible to anyone who doesn’t have a Ph.D. in the relevant field — but if you can do that, I think the results would be well worth reading.

Jeanne, that’s a great example. Here’s to a future that looks right!

Benn, good luck getting your money back. The whole point of Bezos’ phallorocket — well, other than displaying just how obsessed he appears to be about his own masculine inadequacy — is to con you into forking over money to his various sleazy gimmicks under the delusion that they have anything at all to do with the future.

NomadicBeer, of course conspiracies exist. I’ve written a book about them, you know! What most people still don’t get is that conspiracies are a tactic of weakness; you organize in secret when you don’t have the option of doing things right out in the open. The fact that there are things going on in secret is a very good sign that the people involved know just how little real power they actually have.

Owen, exactly. Education is a two-edged sword, and when it turns into ideological indoctrination — no matter what ideology we’re talking about — the result is an acquired stupidity far more resistant to learning than the natural kind. Combine that with a system that shelters elites from the consequences of their mistakes, like the one we have in the US today, and you can count on having a system stumbling from one disaster to another, making the kind of mistakes that an ordinary moron would reject as just plain dumb.

Markéta, thanks for this — that’s an extremely useful metaphor.

DenG, thank you.

It seems we have already had a good lesson about how unpredictable the future can be. Over the past year and a half, I’ve experienced several times talking to someone, and they say, with a look of bewilderment and sadness on their face, “I never thought I’d see something like this in my lifetime”, or, “I never thought I’d be worried about tyranny in the USA in my lifetime”, or “Who would have thought, just a year ago, that our government would be forcing people to take experimental vaccines, and threatening them if they didn’t?” etc. Yet here we are, and quite quickly. Do you think that this experience will help people be more flexible in their expectations of the future? Or will most people just cling to their preferred outcome?

I made the mistake a couple of months ago of telling a woman about the Riot for Austerity project I participated in back in 2008-2010. She had dumped into the conversation “we are all going to die in 5 years from climate change unless the government does something.” I decided to mess with her NPC script and share what the project was about and how we each came up with creative solutions to use less energy, grow food, etc. When I stopped, she looked at me with a totally straight face and said, “It’s not what we do as individuals, it’s what the government does that matters.”

What clicked into place for me then is that the majority of people are waiting for an expert or authority figure to direct everyone to The One Right Answer.™ It will be communicated and metrics put in place to assess compliance to TORA. Of course The Good People™ will be leaders and show their utmost support. It sounds a lot like a school classroom, doesn’t it? I think it’s no mistake schools are designed the way they are to support the habitual thinking needed to support the managerial class.

In this awful world this woman wants, we all suffer our lot together and no one can deviate from the plan. It’s no wonder people brag about their drinking and governments want more legalized pot everywhere. It makes living more bearable for them.

This essay is a good reminder to just get cracking on breaking down that terrible narrative created by the media and do useful things. Ignore the haters and the losers. They will wail and whine at people while waiting for The One Right Answer™ to come so they can follow it.

It seems to me that as global techno-industrial society breaks down, in addition to creating or expanding local networks and organizations, it may be helpful and important to join or start a local chapter of an existing traditional fraternal organization like the Freemasons, Oddfellows, etc. Most of these organizations are very secretive, with information being revealed only after joining and as a member gets promoted up the chain, making it extremely difficult to know what the organization’s true nature or purpose actually is. Many of them have affiliations, branches and related orders that may or may not still be interested in the common good.

My wife’s grandfather was a Freemason, and her grandmother was in the Eastern Star (both have passed). That is the extent of any familial relation I have to these organizations, as my own family had no affiliation with any. I have done some research and am confused by all of the conflicting information and opinions out there about them (and internet research on just any topic is growing increasingly unreliable). I am not even sure what other organizations – besides the Masonic lodges I sometimes pass by – still exist in northern New England. It appears that some of these organizations started out with benevolent intentions, and either still remain so or have slipped to the dark side (so to speak), while some were set up for nefarious purposes altogether.

I guess my questions are: Do you expect membership in such organizations to grow in the near future?

Which of the organizations are still in existence and viable in the USA? How does one discern which existing fraternal organizations (or their branches/orders) still have a positive focus and intention, and which have been coopted by people with evil intent? Do you have any recommendations of which organizations and orders are benevolent and beneficial to explore, and which have been taken over by dark forces or should be avoided altogether? Maybe this topic is the stuff of a future JMG essay. Thank you again for all of your great work.

John,

I continue to be amazed at how you bring such clarity and useful commentary to us every week. I share it a lot, with the hope of course that at least a few will pay attention.

The whole abstraction thing was always summarised so wonderfully in Zen

“Talk does not Cook the rice.”

@Pixellated

I hadn’t noticed the anchor and chain, though I was familiar with the rest of the story. The whole thing is a little farcical, and a legally-required mini anchor for a stuck barge is just icing.

LOUD APPLAUSE for your rundown of our predicament and the historical failures to do better. I wish that I could write as well!

But I believe that I see a logical and effective remedy for the historical problems you describe. All of the failures you cite are based on mental defects long since built into us by social leaders. Which we now have evidence can be avoided. Actions that I have personally experienced due to loving parents plus odd coincidences. For immediately checkable facts, see: https://en.wikipedia.org/wiki/Boris_Sidis and https://en.wikipedia.org/wiki/William_Sidis. And other writings by Boris that are available online, such as https://web.archive.org/web/20070909192551/http://www.sidis.net/philistine_and_genius_appendix.htm

Over a century ago, Sidis, a professional psychologist (and jailed by his native Russia for violating their “keep-em-stupid” rules and teaching commoners to read), emphasized that we crippled our children by misusing their early years. Evolution has naturally made swift early learning vital for all organisms with brains, so both physical brain growth and its organization are at their peak in the earliest times. So I say forget the “preschool age” idea that has long forced us to think that kids need to be past those best years before being handed over to professionals to “learn” – really to be indoctrinated.

Instead, I learned to read well by age 3, just from my mother pointing to the words as she read them in the usual kid stories and books that I loved then, and also explaining about letters and how their sounds worked to form the words and sentences. There’s much more to be said, but the other BIG point I see as highest priority is that systems thinking came naturally, decades before I ever heard the term. Connecting the scenes and facts in everything I read and/or experienced was a natural drive, producing a unique pleasure as I formed a worldview. Which gave me a 160 IQ when entering 1st grade and being skipped into 2nd. One unified worldview, unlike the brain-wasting several we are usually forced into. With zero coaching, I saw the difference between National Geographic articles and the kid (and adult) stories I used to value too highly. So here I am at age 76, still learning from excellent sources like Ecosophia, and trying to spread the best idea I have to offer.

It may be of interest here what Professor Mattias Desmet (the one Dr. Robert Malone recommended because of his theory about mass formation) mentioned in

https://seemorerocks.is/headwind-part-2-prof-mattias-desmet-msc-phd-clinical-psychologist/

between 22:00 and 26:00:

For more than 20 years we have had serious problems with the quality of science and the connection between the results measured and the conclusions drawn from them. It is estimated that at least 73 % of all published papers in medicine are completely wrong.

He mentions especially the following articles: “Reproducibility: A tragedy of errors” in Nature, 2016 ( https://www.nature.com/articles/530027a ) and “Why Most Published Research Findings Are False” by John P. A. Ioannidis, in PLoS Medicine, 2005.

Thus the managerial class based its management of the corona crisis and the vaccination program on the conclusions of “studies” of which at least 73 % are completely wrong.

Another point is that Professor Joseph Tainter mentioned in “The Collapse of Complex Societies” and in “Drilling Down: The Gulf Oil Debacle and Our Energy Dilemma” that the Eastern Roman Empire evaded collapse in the 7th century by dramatically reducing its complexity. It seems they fired most of their bureaucrats and managerial class. The pandemic demonstrated the uselessness of many institutions and of large parts of our complexity. Thus we learned that we can reduce our complexity and can close down some institutions without regret.

@JMG

An article about how neoclassical economics is hopelessly detached from reality.

https://www.scientificamerican.com/article/the-economist-has-no-clothes/

@JMG

An interesting article about Autism

https://www.salon.com/2014/09/01/we_might_have_autism_backwards_what_broken_mirror_and_broken_mentalizing_theories_could_have_wrong/

(I also have Asperger’s syndrome)

The insight, when it came, was almost blinding. The third stone from the sun, Earth, belonged to the state of Uqbar. But, the state of governance, which was Uqbar, had been usurped by the Echthroi.

Uqbar is the harmonic state of planetary governance. Uqbar is a state of mind

cobo

That English Bay Barge song is great. If filthy Americans are allowed in, I’ll go look at it sometime soon.

Dig this article from RESCUE. https://rescue.substack.com/p/chilling-pandemic-data-from-the-insurance

“A major Indiana-based insurance company reports unprecedented 40 percent death rate increases industry-wide among working-age Americans in 2021 compared to pre-pandemic data.” The insurance data is for people who are employed and thus have decent access to health care. CDC data also shows a sharp rise in death rate in the 18-64 age group far beyond what is accounted for directly by Covid. 252,000 deaths overall, including 50,600 where Covid was the cause or contributing factor.

The article does not speculate on causes. Clearly, the US working class is facing stresses. That “future” we should have prepared for is here.

Of course, as you say, 20th century style corporate capitalism, and communism (as implemented in Eastern Europe rather than the ideal Communism, i.e. basically the same as the first but all owned by the state) exhibit flaws where abstractions diverge from reality.

Contemporary neo-liberal capitalism, where instead of everyone working for the Corporation, the workers on the ground are actually working for an outsourced subcontractor, and only a few managers actually work for the Corporation, could turn this into overdrive, because the people in management don’t even work for the same organisation as those who will actually do the work. Therefore the managers can be tempted to only deal with abstractions, and obscure their thinking by talking in management-speak peppered with buzzwords, so that it is very hard to connect their pronouncements to actual reality.

Dear John Michael,

I realize that it was not the main thrust of your essay here, but I couldn’t help but smile to myself when you explained the difference between the two different forms of the verb “to know” in languages other than English, which we English speakers roll into one.

This reminds me of the similar difference in words, and concepts, of the English verb “can” (as in “to be able”), and of a humorous incident I had years ago with a shop keeper in La Paz, Bolivia.

Looking at some musical instruments in her shop which intrigued me (being that they, “charangos”, were made with the shell of an armadillo), the shopkeeper encouraged me to pick one up and try it out. However, I had no experience playing any musical instrument but the piano, and not wanting to look like an idiot, I politely told her “Lo siento, pero no puedo tocarlo” (literally, “I’m sorry, but I cannot play it”). She immediately looked down at my hands in surprise, at which point I realized that what I had said in Spanish came out not as “I can’t play it”, as we would commonly say it in English, but as “I am UNABLE to play it”. I then realized that I should have used one of the two forms of the verb “to know”, saber, meaning “to know how to”, instead.

Even so, it was not as embarrassing an episode as when I accidentally asked our guide on a Bolivian jungle trip, as a particularly beautiful butterfly (“mariposa”) went by, “What kind of homosexual (“maricon”) is that?”, instead.

JMG, thanks for a refreshing dose of reality as usual!

Ryan,

I have a friend with a small farm whose (rather new and otherwise reliable) tractor has been out of commission for nearly a year because a computer chip has failed in the instrument panel. There is still no date for when a replacement will be available. The future will have a great demand for folks who are able to creatively make things work, by fabricating parts, bypassing unnecessary complexity, repurposing salvage components, etc. No idea if that’s your cup of tea but it’s what is on my mind today.

Sort of relevant, re: rule of “experts.” Vague memory here: read about LBJ bringing in a bunch of “the best and the brightest”. Or maybe one of LBJ’s lieutenants. LBJ or lieutenant was very excited about the project. A savvy politico sighed and said something like, “I wish one of them had at least run for sheriff once.”

Ryan Tiefen @ 13 My thoughts on the question you asked, for what they might be worth.

First, are you sure the career you planned or might be abandoning is useless? There is a crying need for virtuous, honest, diligent professionals at every level. Be the engineer who can design a functioning for the next 300 years sewer system instead of the glamour projects. If law is your passion, be the capable and diligent, (and trusted!) public defender. Be the MD who does house calls and doesn’t disdain herbal medications. How you go about finding useful training, there I can’t help you.

Hands on occupations for which there is need right now today include: sharpening of scissors, garden tools, knives etc. If you can sharpen my sewing scissors, I am your new best friend for life. In fact honest handymen of all kinds are making good livings as their own bosses. Revitalize and refurbish just about anything from small engines to houses to old cars. I love the old style toasters in which the sides open, but they need rewired. As do the excellent mid 20thC irons, and the mixers from that era could use a good overhaul as well. I would far rather buy my grandkid a midcentury Sunbeam, cleaned and rewired by a professional restorer, than shell out for one of those overpriced monsters that go for $200 and up.

If you are into gardening in any way, two low upfront investment business opportunities might be 1. all natural yard care–no blow dryers, compost piles maintained for the customer, no avocado theft from the client’s trees, hire college students and make sure they do their bathrooming before they go to work. This would be upscale for people willing to pay. and 2. heirloom and organic nursery stock. There is ever increasing interest in vegetable gardening, but not everyone wants to start seeds themselves. I have seen many times that heirloom and unusual varieties sell out at farmer’s markets at once. Set yourself apart by doing research and being able to answer questions. I once asked the variety name of a potted rose at a flea market. “What do you care what the name is? It’s a rose.” growled the seller. Sure, there are likely 5-10 nurseries near where you live, and they all buy from the same wholesale distributor and offer the same selection.

Hi John,

I believe the impacts of the future going forward will increasingly resemble that of a series of natural disasters with cumulative and lasting damage. Or more pessimistically, like continual warfare of people, places and things blown to smithereens. Having got a minor taste of real-life disaster response (most notably the Northridge earthquake back in ’94), I’m willing to hang my hat on three general guidelines:

1) “No battle plan survives its first contact with the enemy.”–Clausewitz (I think) And here the “enemy” includes not only the things unexpectedly destroyed, but the unexpected reactions of people. The fall of Western Rome was jumping off a ladder compared to the fall of modernity, which is looking more like jumping off a cliff.

2) “The Plan is nothing; planning is everything.”–Dwight D. Eisenhower. The ability to quickly revise your response to actual events depends on detailed knowledge of your surroundings: terrain, people, resources, etc. Crafting a plan(s) is just a means to that end.

3) “Not every problem can be solved, but every problem can be decided.”–(author unknown) For example, you have the fire fighting resources (what’s left of them) to rescue group A on the west side of town, or group B on the east side of town, but not both. Something tells me the future will have a lot more deciding than solving.

One more general comment: The more complex, interdependent and specialized a society becomes, the greater the level of trust needed to make it work. (Oppressive oligarchies ultimately depend on regions of the world where there’s sufficient trust to produce the goodies.) But the high level of affluence that results from earlier eras of discipline and resource abundance, makes it easy for later generations to think that pace Kunstler, “anything goes and nothing matters.” But this attitude slowly at first, then quickly, erodes trust at the very time (resource shortages) that trust would be most needed. And so one faces a shortage of societal capital at the same time as the shortage of physical capital.

Lydia, I don’t think that’s settled yet. It depends partly on how much further the current mess proceeds, and partly on how many of us make a point of keeping the memory alive.

Denis, yes, that’s an important part of it. Another part is that people in the comfortable classes assume that if the government does something, they’ll be sheltered and the working classes and the poor will get to bear the pain — that’s been the guiding theme of US policy for decades now, after all.

John, that’s something I’ve been advocating for quite some time now, and it remains an important possibility — if enough people get off their rumps and join a lodge. (Full disclosure — I’m a 32° Freemason, past Noble Grand of an Odd Fellows lodge, and past Worthy Master of a Grange.) I’ve done a number of posts on lodges already; here’s one from a little over a year ago, and if you have a copy of the collected Archdruid Report essays you’ll find more in there. As for which orders are still going, the three I mentioned earlier are active in most parts of the country, but your best bet is to check out what exists in your own area — a lodge doesn’t have to have a national organization to be worth joining.

Jerry, thanks for this.

Michael, true enough! Unfortunately none of the old Zen masters took it the next step and pointed out that university degrees don’t cook the rice, either — burning the diploma doesn’t generate anything like enough heat… 😉

Tom, there are various schemes for improving education. I’d encourage readers who are interested to check out the one you favor, as well as any of the others that interest them.

Christophe, the British Medical Journal — that hotbed of anti-science crackpots! — published an article not long ago pointing out that at this point, it’s wisest to assume that all health research is fraudulent unless proven otherwise. If one of the world’s premier medical journals is saying that, why, I think it’s time to listen.

Stellarwind, thank you for both of these.

Coboarts, the prospects of a mashup of Jorge Luis Borges and Madeleine L’Engle are — well, dizzying.

BeckyZ, yes, I’ve been watching the elevated death rates. It’ll be interesting to see which way the curve goes from here.

MawKernewek, a very good point. Maybe the managerial corporatists were embarrassed to find that the socialists were even better at failing than they were, and decided to up their game in order to compete!

Alan, funny! Stories like that are good reminders of the way that our languages shape our thinking and our experiences.

Mark, just one of the services I offer. 😉

Nemo, yep. David Halberstam’s book The Best and the Brightest is about their total failure in Vietnam — it’s a good object lesson.

Greg, four good points.

Possibly, a society that has focused so strongly on gaining book-knowledge (or, as I’d call it, theory) and on analyzing things and pulling them apart has to flee into abstraction in order not to go mad.

To give an example, the health subject that shall not be named had led me to investigate a lot about the immune system and how it works and there I found this magnificent image of a T-cell, captured by an electron microscope:

https://upload.wikimedia.org/wikipedia/commons/thumb/8/89/SEM_Lymphocyte.jpg/1024px-SEM_Lymphocyte.jpg (I unfortunately don’t know how to embed images here…)

Now, if we assume that this is this and that kind of cell and that it interacts by certain chemical processes with other cells in the body and that you can learn to manipulate it in the way you want it and so on – that’s one thing. You’re in charge and eventually, in some more or less distant (but ever distant) future, you’re going to have it all figured out and with that certainty, you can feel great, for a while.

But then imagine that somebody who approaches from this direction realizes that this thing he captured with his SEM is conscious. A conscious, living creature of its own, with its own perceptions and its own hidden life. Imagine discovering that every cell in your body is such a living, conscious entity.

They’re talking about the laws of nature as if this was something you could understand. There is possibly no law, but just life. The crawling chaos, if you like. What we call “laws” are clumsy attempts to formulate some observations we have made in a language called mathematics. It answers a few “how?” but not a single “why?” or “what?” Once you really understand this from deep within, you realize that you don’t know anything, that you don’t even know who or what you are, nothing. And you have nothing in store to deal with that experience. I once came along that road and I made the realization. It took me more than a year to pull myself out of the pits I fell into and be able to fully participate in “normal” life again.

But despite all its flaws and limitations, I hold science (not Science) in great esteem. It has shown me a way to perceive nature in much more depth and detail and has played its part in opening the gates to look beyond. Isn’t that image of the T-lymphocyte an absolutely great object for meditation?

It’s a journey beyond words, though I fear most will not take it as remarkably easily as Owen Merril did… For many of them, the road might first lead through the suburbs of hell, before it turns to some better place. But at some point you probably have to leave the land of abstraction.

Greetings,

Nachtgurke

Hi John Michael,

Yes, I encounter this story all of the time. People dismiss my lived experience and so I dismiss their abstractions. It’s probably not an optimal response, but how much energy can I personally chuck at the problem? Dare I mention thirteen years of experience with relying upon renewable energy systems (and you’ve heard me over these long years constantly tinkering and modifying them so they’ll work at least better than they did) versus encountering a strongly held belief system on the subject? It’s utterly bonkers.

And um, err, dirt under fingernails. With a tiny fraction of the population involved in agriculture down here (around 1% I believe) it is little wonder that nobody seems even remotely concerned that imports of phosphate minerals have dried up because of the land of stuff’s decision to ban exports. That’s your health concern you’ve been worrying about there, that is. It’s not going to end well. Oh well.

I’m coming around to the idea that we are currently reaching the opposite end of the economic continuum from the Great Depression. It’s an intriguing idea because it kind of fits the facts on the ground. We’ve got the flip side of the economic story, but with the same outcome. Take for example employment. Right now there are plenty of jobs that can’t be filled for all sorts of reasons, and unemployment (like sitting at home doing nothing on orders isn’t exactly that!). But back then, there was mass unemployment and few if any available jobs. Back then there was stuff on the shelves but finding consumers with money was not so easy. Nowadays, people have plenty of money, but the stuff on the shelves is in short supply. I dunno, I’m cogitating upon this story – there’s something in it. And I’m hearing some intriguing stories of backlash with employment issues and mandates. Interesting.

Looks like the weather is finally warming up here – your mention of growing seasons has been part of my life these past few months. And that Tongan volcano might cool the next growing season. Oh well, this growing stuff gets easier with time and experience.

Cheers

Chris

Greetings Mr. Greer,

I’m the main ‘chef’ in our domicile. As is often the case, I’ll parse through several recipes, either cookbooks we own, or via the internet (u-toob demos, online recipes, or what have you..). Then, after soaking up such in my brainsack .. whilst taking stock of the larder as well as of a treasure chest of spices, I chart my immediate epicurean journey, hoping to sail into a good and delicious harbor of sensation. Admittedly, I sometimes hit a reef of non-palatable destruction .. but survive to chance another meal with whatever the home galley can provide..

So, on the one hand I take in what measure I can glean from abstractions, and combine them with experience plus a couple dashes of serendipity to reach my goal.

Cheers

It isn’t quite the same thing, but you could use the terms “theory” for the intellectual knowledge, and “craft” for the practice. Some people have both, most people these days only have one or the other, and those that master something outside of rarified managerial or academic circles have the craft skills.

Masterful essay, JMG – and I like where this series is going. Keep it coming!

Way back in the previous century when I was pursuing a degree in environmental studies, the extremely eclectic faculty was ideologically split between the ‘planners’ and the ‘deep ecologists’. Being a big fan of Aldo Leopold and Walt Whitman, I simply could not fit in with the ‘planners’ group; nor could I understand them: so boringly, predictably rational! Planning seemed to squeeze the soul out of life. And after a cursory study of the environmental mess that we had made for ourselves, I figured that we would not be able to ‘plan’ our way out of this.

I suppose that we cannot live without abstractions, but we should choose our abstractions wisely and keep them to a healthy minimum. We certainly should not live in a house made out of abstractions – which is exactly what our ‘illustrious’ planners seem to have created. A house of cards in my eyes. I trust ‘know-how’ a lot more than ‘book learning’ and I am hoping that my grounding in the former will help me fumble my way through whatever future I may find myself in.

As for the immediate future .. where the unimaginably clueless elite are concerned, both here in the states and around the planet.. well, let me just say that they are as a scow heaped with stinking, putrid offal, stuck in the doldrums, situated right over a series of seamounts, comprised of a seething public anger, that are about ready to blow.. bigtime!

“It’s raining on Mongo…” Not only do many sci-fi planets have the same weather, but the same beings planet wide. Klingons live on one planet, Ferengi on another, Vulcans on yet another. Makes you wonder how they ever learned to get along with other beings.

Great essay JMG…thanks.