All things considered, this may seem like an odd time to start talking again about the nature, history, and future of enchantment. That was one of the core themes I explored in posts during the first half of the year, granted, and I had much more to say about it when the pressures of a world system coming unglued made it necessary to talk about current events instead.

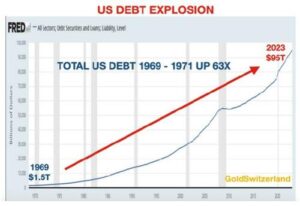

Those pressures haven’t abated at all—quite the contrary. The United States is currently trying to pay the costs and provide the munitions for two wars abroad, at a time when our government is close to US$34 trillion in debt and our defense industry has focused so intently on carrying out devastating raids on the national budget that it can no longer accomplish such subsidiary tasks as manufacturing bullets and bombs in adequate volume. Joe Biden’s approval ratings are dropping so steadily that he may just finish his term as the least popular president in US history, and his party is lurching toward open conflict between pro- and anti-Biden factions, not to mention pro-Israel and pro-Palestinian factions. Meanwhile Trump rises steadily in the polls and his party is drawing up plans for the most sweeping reforms in the US bureaucracy in most of a century.

At the same time, the US economy is stumbling into stagflation, that awkward and theoretically impossible condition where economic activity slows but prices keep rising. (Hint: it happens whenever the price of oil rises due to supply constraints.) Commercial real estate is in freefall and several other economic sectors are in deep trouble, but Biden’s flacks are insisting at the top of their lungs that everything is fine and the economy has never been better. It’ll be intriguing to see what effects that exercise in over-the-top gaslighting turns out to have; if history is anything to go by, the results will not be what Biden’s handlers want.

There’s more than this going on, of course. I could fill an entire post quite easily with signs of crisis from the nations of the modern industrial West, and another post with the evidence that much of the rest of the world is prospering as our decline picks up speed. For the moment, though, I want to set that aside and talk about enchantment. That’s not as pointless as it may seem; just as politics is downstream from culture, culture is downstream from imagination, and imagination is downstream from the states of consciousness that give imagination its context. Those states of consciousness change over time, and the change isn’t necessarily one-way.

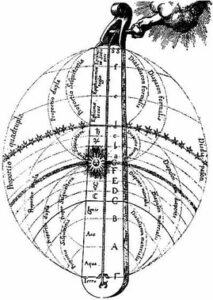

This was the theme of an interesting recent piece by British journalist Mary Harrington in UnHerd. Harrington notes the difference between the modern experience (not merely “concept”) of the cosmos as lumps of matter tumbling pointlessly in the void, and the medieval experience (again, it was never just a concept) of the cosmos as a living whole in which not even the tiniest corner was without life, intelligence, and spirit. She then goes on to point out that the medieval way of seeing the world is much more accessible to us than many people like to think, and ends by suggesting that the flight from purely pragmatic social engineering to the moral crusades of left and right and the increasing influence of religious ideas in public life may herald the reenchantment of everyday life.

To regular readers of this blog, this will not be any kind of surprise. Since the beginning of this year, starting with a review of the implications of Jason Josephson-Storm’s insightful book The Myth of Disenchantment, a series of posts here has talked about what the word “enchantment” means, why so many fashionable thinkers have insisted that it belongs solely to the discarded and devalued past, and why I think it will be among the most essential concepts for making sense of the future immediately ahead of us. The news stories mentioned above, and the broader unraveling of industrial society in which they each play a role, might best be seen as stages in the dissolution of one state of consciousness and the birth pangs of another.

It’s been fashionable since the days of Max Weber to define the modern state of consciousness as “disenchanted.” Central not only to Weber’s core thesis but also to most modern conceptions of history, including the works of Ken Wilber, Owen Barfield, and Jean Gebser we discussed earlier this year, is the belief that modern thinking is uniquely free of mythology and magic, that we see the world in its bare nudity — free of the fancy conceptual clothing that hid its allurements from past ages. It’s a convenient way of justifying the most absurd product of the collective egotism of modern times, the blustering insistence that the people of every past age and every other culture were too stupid to notice that the only reasonable way to think about the world is ours.

However cozy and convenient that belief may be, it’s hopelessly mistaken. It’s long past time to talk about that.

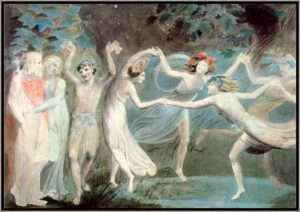

We can begin with one of the standard modern historical notions about the evolution of thought in the Western world. The standard narrative holds that the scientific revolution of the seventeenth century led to the collapse of the enchanted world of the Renaissance and its replacement by the soulless world of modernity. It’s quite a lively little morality play: there’s the whole population of Europe, believing in elves and magic and the rest of it, and then the scientists show up and prove that they’re just plain wrong. The elves exit stage left, hauling the Earth away from the center of the universe as they go, and once a bunch of elderly conservatives read their lines bewailing the loss of beauty and meaning, and the scientists sing a little ditty in praise of Truth and Reason, the curtain falls on a thoroughly modern world.

This isn’t just a bit of pop-culture blather, although you can certainly find it throughout current pop culture. It also has a central role in classic works on the history of ideas such as Alexander Koyré’s From the Closed World to the Open Universe, Sigfried Giedion’s Space, Time, and Architecture, and Rudolf Wittkower’s Architectural Principles in the Age of Humanism, and in far more recent works as well. To a very real extent this narrative is the cornerstone of modern Western industrial culture, the story we use to explain to ourselves where we came from, where we’re going, and why that matters. It’s also a drastic falsification of what actually happened.

What actually happened is clear if you look at the timeline: the abandonment of Renaissance ideas of universal order, meaning, and value happened before the first stirrings of modern science, not after it. By the mid-sixteenth century, the same currents of thought that drove the Protestant Reformation were already shredding the Renaissance synthesis and rejecting the old enchanted world. Much of what led Protestant reformers to break with Rome, in fact, was Catholicism’s reliance on religious enchantment: the precisely scripted rituals and sacred objects that, to the enchanted mind, possessed a link to the spiritual realm were exactly what the disenchanted minds of the Reformation could not accept.

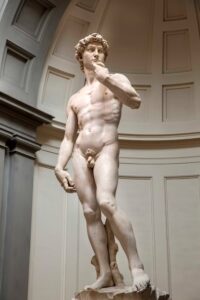

You can see the same process at work in the shift from Renaissance to Baroque art. Consider two statues of David, one by Michelangelo, the other by Bernini. Michelangelo’s sculpture is one of the supreme works of Renaissance art; it is at rest, like the stationary Earth of the old cosmology, occupying a central place in the viewer’s cosmos, oriented toward nothing outside itself. It is also proportioned according to the sacred geometries of Renaissance tradition. Bernini’s statue is one of the great works of Baroque statuary, and it differs in exactly the way the world of the early modern West differed from that of the Renaissance: it is in motion, oriented toward the far distance, and its proportions aren’t based on any sort of sacred canon; they were chosen by Bernini purely on the basis of what pleased him.

The arts are remarkably useful here as a way of checking the traditional narrative. Histories of science love to talk, for example, about how Johannes Kepler discarded millennia of tradition by postulating that the planets move around the sun in ellipses rather than circles. They don’t generally mention that ellipses had become fashionable in architecture more than half a century before Kepler picked them up, and churches and plazas with elliptical patterns were springing up all over Europe by the time his writings were in vogue. It’s not that Kepler followed the evidence and stumbled across the ellipse; it’s that ellipses were fashionable, and Kepler figured out how to apply them to astronomy.

Now of course the facts that Kepler got his basic model from the pop culture of his time, and the sciences more generally followed the lead of early modern art and popular culture rather than blazing the trail the currently fashionable narrative assigns them, aren’t enough by themselves to disprove those narratives. Doubtless true believers in modern science could claim that the vagaries of intellectual and artistic fashion that made ellipses, infinite space, bodies in motion, and the rest of it popular in the cultural sphere just happened to spawn a set of concepts uniquely suited to make sense of the natural world.

Yet there’s another difficulty here, one that philosophers have been quietly discussing for some decades now. You may be aware, dear reader, that scientific popularizers like Neil deGrasse Tyson hate philosophy. The issue we’re about to discuss is a core reason why. It goes by the name of underdetermination. It’s worth taking some time to understand what this means, because our world is already being shaped by the consequences of the underdetermination of scientific ideas and that process is still in its early stages.

Like most of the serious questions that keep philosophers busy, this one can be described quite easily. Let’s say we have a set of observations about nature that we’re trying to understand, and we come up with a hypothesis that attempts to explain them. We draw some logical conclusions from that hypothesis, and come up with an experimental test that can go one of two ways: one way that supports the hypothesis, the other way that doesn’t. We run the test, and the result is the one that supports the hypothesis. We repeat this process with several other experimental tests, and each of the results supports the hypothesis. Does this prove that the hypothesis is true?

No, it does not. It simply shows that the hypothesis successfully predicts the outcome of the specific tests we ran. That’s important, and it’s well worth knowing, but it doesn’t prove that the hypothesis is true—only that it’s useful.

It gets worse. Given any set of observations, it is possible to come up with an infinite number of hypotheses that will account for them. It’s impossible to think of all those hypotheses and figure out ways to test each of them against the data, for the same reason that if you try to count from one to infinity in any finite period of time, you’ll fail. Thus you can be sure, even if you spend the rest of your life running tests, that there are still an infinite number of hypotheses out there that fit all the data you’ve gathered just as well as the hypothesis you want to prove.
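For readers who like to see such things worked out, here is a minimal sketch in Python of the point just made. Everything in it is invented for illustration: the four observations, the "accepted" rule, and the function names are assumptions of mine, not data from any real science. The trick is simply to add to the accepted rule a term that equals zero at every input already tested, so that each rival passes every test run so far and still says something different about the next one.

```python
from math import prod

# Hypothetical observations: (input, measured outcome) pairs.
observations = [(0, 1), (1, 3), (2, 5), (3, 7)]

def base_hypothesis(x):
    """The illustrative 'accepted theory': outcome = 2x + 1."""
    return 2 * x + 1

def rival_hypothesis(k):
    """Build a rival that agrees with the base on every observed input.

    The extra term k * (x - x1)(x - x2)... is zero at each observed x,
    so no test already run can tell this rival from the base.
    """
    def h(x):
        return base_hypothesis(x) + k * prod(x - xi for xi, _ in observations)
    return h

def fits(h):
    """True if the hypothesis reproduces every observation exactly."""
    return all(h(x) == y for x, y in observations)

hypotheses = [base_hypothesis] + [rival_hypothesis(k) for k in range(1, 6)]

# Every hypothesis matches all the data gathered so far...
print(all(fits(h) for h in hypotheses))
# ...yet they disagree about an input nobody has tested yet.
print([h(4) for h in hypotheses])
```

Since `k` can be any number at all, the supply of rivals never runs out, and gathering one more observation only rules out those rivals that happen to miss it; the same construction can then be repeated on the enlarged data set.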

Now of course the obvious response of scientists to this argument is to demand that philosophers come up with one—just one!—hypothesis that explains the evidence gathered by some modern science as well as the accepted theories do. The philosophers’ proper answer is, “Sure—just give us a few hundred well-trained grad students and a couple of decades.” Current scientific theories look as impressive as they do, and succeed as well as they do in predicting the behavior of things in the world, because armies of scientists have beavered away for a couple of centuries tinkering with their theories to make them fit the observed behavior of nature as closely as possible. That’s what scientists do, and they’re good at it. The models they’ve created do an excellent job of predicting the behavior of many things in nature—but again, that doesn’t make those models true. It just makes them useful.

The history of science is among other things a potent antidote to the claim that today’s accepted theories have some permanent claim on truth. No theory of nature has ever looked as imposing and convincing as late nineteenth century physics. The physics of that time provided fantastically accurate predictions of nearly all of the phenomena in nature. Sure, nobody had figured out how the sun could keep producing light and heat long enough to fit the geological evidence for life on earth; the planet Mercury had a wobble nobody could explain, and attempts to explain it by postulating another planet named Vulcan hadn’t succeeded very well; and heat and light radiating from a black body behaved in ways that really didn’t seem to make any sense at all—but all those were tiny little details that would surely be solved with a little more work.

By 1910, due to those tiny little details, the entire structure of late nineteenth century physics was in ruins. All those carefully developed theories had to be scrapped because the tiny details turned into vast gaping chasms that ran straight through the middle of physics. Worse, the two theories that more or less accounted for them, quantum theory and the theory of relativity, contradict each other in important ways. That’s why physicists ever since have been trying to come up with a so-called Theory of Everything to bridge the gap. They’ve failed so far, and there’s no particular reason to believe that a second century of effort will bring them any more success.

The collapse of certainty that flattened the soaring edifice of late nineteenth century physics isn’t unique in the history of science. It isn’t even unusual. As Thomas Kuhn showed more than half a century ago in his book The Structure of Scientific Revolutions, this is the normal rhythm of scientific discovery and explanation. It happens for a reason I discussed in an earlier post: scientific facts are social constructs.

Every step along the way from the first inkling of a hypothesis to the published textbooks that enshrine (or entomb) the work of past research is shaped, often to an overwhelming degree, by social interactions among scientists, between scientists and the institutions that pay them and finance their investigations, and between the scientific community and the larger society. Nature gets a vote in there by way of experiment—that’s what makes science more practically useful than many of the other ways our reality is socially constructed—but scientific reality is still a social product. Why does one hypothesis get turned into the centerpiece of a theory while others get discarded? By and large, it’s a matter of social pressures within the scientific community.

Glance back over the history of science, too, and you’ll notice something else that doesn’t get much comment: the influence of social factors over scientific inquiry has increased over time. Consider the phenomenon of peer review. Back in the nineteenth century, that didn’t exist. A scientist who completed a series of experiments published a paper about them, other scientists read the paper and did their own experiments to support or disprove the claim made in that first paper, and the whole thing would be hashed out in the letters columns of journals, sometimes for years, until it was clear who was right. That sort of free-for-all made for excellent science, and may explain why the rate of technological advancement was higher by most measures in the late nineteenth century than it is today.

Today? If you submit a paper to a scientific journal, it goes out for peer review, meaning that the journal sends it around to three or four acknowledged experts in the field and won’t publish it unless they give it a thumbs up. The politics around who gets to be peer reviewers in each subset of each field of science are intense and often bitter, and quite often a paper that offers evidence disproving the conventional wisdom in some field of science will be denied publication no matter how good the research is, because the peer reviewers are committed to the defense of the status quo. They may have good financial reasons for that: in an era when most funding for scientific research comes from corporate sources or from government bureaucracies “influenced” (we can use the polite word) by corporate money, who gets funding and who doesn’t has much more to do with quarterly profits than it does with good science.

Over time, in other words, the models of the cosmos upheld by scientific institutions, by the media, and by schools and universities as the truth about nature have become more and more influenced by social factors. Meanwhile, while scientists are encouraged to criticize the work of other scientists, people outside the scientific community who raise awkward questions about the validity and accuracy of this or that model are shouted down by the propagandists of science, and by all those figures in authority who benefit from claiming that their ideas shouldn’t be questioned. In effect, scientists have become the priesthood of the modern industrial world, handing down claims about the cosmos that no one else is supposed to question, and (as priesthoods always are) being manipulated by existing power structures to defend the status quo.

This same insight can be summed up neatly in another way. The claim that the coming of science meant the end of enchantment is completely mistaken, because science is itself an enchantment. The world inhabited by true believers in science is an enchanted world, a world where certain people in white lab coats have a unique ability to know the truth about nature, and the rest of us are supposed to accept whatever they say on blind faith, no matter how often the approved dogma changes and no matter how much harm it causes. There was no disenchantment of the world; instead, we exchanged an old enchantment for a newer one. Granted, the new enchantment had its advantages, but it also has had tremendous costs, and the bills are still coming due.

Yet there’s another factor in play, because the enchantment of science is breaking down around us right now. We’ll talk about how that’s happening, and what that means, in future posts.

Great stuff as always, sir!

It amuses me to no end the reactions I get when pointing out to enthusiasts of the Simulation hypothesis that their idea reinstates God as the programmer.

It did not last: the devil, shouting “Ho. Let Einstein be,” restored the status quo.

It is only by interfacing with your work, Mr. Greer, that I have come to enjoy the application of seeing one set of modern circumstances as an extension, or another version, of something as ancient as our species.

I work in industrial electricity, power distribution, etc., and if science is our version of an enchantment, then my industry is an occult guild system – an equivalent to the Freemasons, the Golden Dawn, etc.

We are hired as apprentices, become initiates in the rites, with lodges for various critical tasks – generation, transmission, system protection, distribution to customers, cathodic protection, etc. We have our secret rituals that are handed down from master to apprentice, with ancient texts (e.g. “Theory and calculation of alternating current” by Steinmetz) and hidden masters (Nikola Tesla); regular guild meetings develop the rites (ASTM and IEEE standards and test procedures).

Viewed in this way, my anxiety at work has dropped considerably – I am but a lowly initiate working in one of the grand lodge’s sub-groups. We pass on our secrets and magical numbers (square root of 3 is a big one) by meditation (“I am on my break”) and tests of mettle (“Fix this”) and knowledge (certification tests and civil service exams).

Back in the 80s when I was a struggling young father with barely a high school diploma I got a job selling and troubleshooting chemical dispenser systems for hospitals, care homes, research labs, and restaurants. Some marketing puke decided we should wear lab coats. I was instantly moved from being an invisible prole to being treated as Someone You Can Trust.

Doors opened. Strangers were deferential. Women who before wouldn’t give me the time of day were giving me an appraising look.

The Enchantment…..

That Kepler’s observations of the heavens followed from a study of geometry will not be a surprise to anyone who has studied the liberating arts and sciences; nor will it cause a Freemason pause, such concepts being inculcated early in their journey.

Finally a stripping of the altars a conservative can relish. It has been in many ways the most truculent, hubristic, and repressive priesthood in history, since perhaps the days of ritual human sacrifice. And I’m not sure they don’t even do some of that. At least indirectly. Who isn’t tired of Science as a religion? Even its high priests show signs of PTSD. Are they even doing Science? Nice summary, excited to continue this vein… My son was helped a lot a few months ago when I explained that our modern mythology that we have no mythology IS our mythology. That made sense to him and a penny dropped. It is largely because of your work that I could see that clearly enough to convey it at the right time.

A pretty obvious observation is that this modern priesthood of scientists will likely end up being severely discredited once it is realized in hindsight just how much harm the Covid mRNA “vaccines” caused.

Last year I couldn’t find my glasses for 4 months. Either Saint Anthony was so swamped with requests he hadn’t got to mine yet, or even he couldn’t find them. One day I was kneeling on my bed with my feet hanging over the edge. Something hit my heel in such a way it could only have fallen from the ceiling. Turned out to be my glasses. Now I wonder if this was St. Anthony having a little fun with the return of enchantment, or for that matter if the gremlins finally dropped my glasses while playing with them. Or if St. Anthony said sternly, “Return those immediately before she starts out driving to [Puppyville, the next town over] and ends up in Fiji!” and the gremlins petulantly flung down the glasses. Either way, I can’t find a “rational” explanation for lost glasses falling from the ceiling.

Also on things that aren’t “rational,” I’d like to thank St. Expedite for his quick help.

“a paper that offers evidence disproving the conventional wisdom in some field of science will be denied publication no matter how good the research is, because the peer reviewers are committed to the defense of the status quo.”

I’ve seen that one myself, and not just from corporations, but from politics. Back in the late ’90s applications for research grants had to include a discussion of the relevance of the proposed subject of study to the problem of global warming (as climate change was known at the time) even if the project had nothing to do with climate. Unless you genuflect to “the narrative,” no money for you! That is largely why I went back into industry. That and I like standing in the middle of operating large scale machinery.

A few years later I started looking at getting my Professional Engineering license. I found the same thing, “List the projects you have been a part of, and how each of them affected Global Warming.” Not “What actions did you take to make sure your pressure vessel did not launch itself into low earth orbit.” I dropped the notion to get a PE license partly for that, and partly for other reasons.

Scientists are now speculating that the universe might not actually be expanding:

https://iai.tv/articles/evidence-the-universe-might-not-be-expanding-auid-2551

I’ve been reading that the placebo effect is getting stronger and stronger. I’ve also read that if a doctor is fooled and thinks that a drug is real and gives it to the patient, it works better. Where does the primary (and even second-hand) placebo fit into enchantment, and can we expect placebos to work even better in the future?

I listened to that podcast you did from 2017 with John Crowley this morning. I was glad to have been able to listen in on that conversation, and glad I didn’t miss it again when it was brought up this past week. This discussion of storytelling was great, but I enjoyed the whole thing.

I need to finish Storm’s book… I left off at the beginning of part 2. In the first part, I really enjoyed reading all of it, but really liked how he showed Crowley’s creative use for enchantment, of James Frazer’s take on disenchantment.

Either way, I’m glad you are back on this series, though yes, those diversions to current events were necessary.

Science can certainly be read as enchantment. Kepler, Bacon, Goethe, Novalis, oh my!

Musical selection of the week: Laurie Spiegel, Kepler’s Harmony of the Worlds, composed and realized at Bell Telephone Laboratories.

https://lauriespiegel.bandcamp.com/track/keplers-harmony-of-the-worlds

Here is a bit I wrote about this:

Like many musicians before her Laurie had been fascinated by the Pythagorean dream of a music of the spheres. When she set about to realize Kepler’s 17th century speculative composition, she had no idea her music would actually be traveling through the spheres. Kepler’s Harmonices Mundi was based on the varying speeds of orbit of the planets around the sun. He wanted to be able to hear “the celestial music that only God could hear” as Spiegel said.

“Kepler had written down his instructions but it had not been possible to actually turn it into sound at that time. But now we had the technology. So I programmed the astronomical data into the computer, told it how to play it, and it just ran.”

More on that here: http://www.sothismedias.com/home/the-bell-sound-3-grooving-with-laurie-spiegel

Dear JMG and commentariat,

Just a little note on circles and ellipses. I am not a mathematician, but I love the discoveries of mathematics and its humility (it always restricts the areas in which its calculations can yield results); there are non-Euclidean spaces in which shapes that to all appearances look more like circles can be defined as triangles. Similar (complicated) equations could, as far as my theoretical knowledge goes, be used to convert or redefine ellipses as circles (and back). Therefore, it is not “truer” to calculate planets’ orbits as elliptical rather than circular; it is just far, far easier (more practical).

Thank you for the text!

Have a nice day.

With regards,

Markéta

My prediction for the year 2024 is that the Fed will present the US debt statistics on a logarithmic scale.

JMG:

I am beginning to think that you and Aurelien have Zoom meetings every week to discuss how you are going to jointly approach subjects from subtly different perspectives.

But bravo on this piece, excellent work.

To my fellow readers/commentators, head over to

https://aurelien2022.substack.com/p/arming-ourselves-against-the-future

There are a bunch of reasons I believe scientists and doctors will be in real trouble as eras and sentiments shift. I have argued that medicine is currently in a Dark Age despite the publicly-promoted view that medicine is in a state of haute advancement. People seem to be dying in waves of Covid vaccine-caused injury in my area, which is about 90% vaccinated. From what I can tell, the vaccine shortens the lifespan of the elderly by 5-10 years. It is causing miscarriages and problems with pregnancy. It is cutting down a lot of young people with nerve disease, turbo-cancers, and heart attack. The kids who got it are sickly — the seasonal flu turns into pneumonia instead of just going away. It is my opinion that having scientists and doctors in one’s lineage will be a source of shame in a couple hundred years. This isn’t just because of the Covid vaccines of course; those vaccines were merely the cherry on top of the cake.

Fantastic essay this week!

Well hell, what should we trust in now? “Trust the numbers” has been one of my favorite mantras when following the science of whatever for a while. I love your posts and always eagerly look forward to the next, but they always leave me a little (or a lot) disoriented!!

This version of science here has some qualities of a malign enchantment (as you said, the bills are still coming due)… but some other modes of science may be less priestly, and have other enchantments about them. The kind of stuff done by Phillip Callahan comes to mind. I hope there will be room for more along those lines as the priesthood loses some of its clout.

Donkey32, ha! I like that. Yeah, I bet that gets colorful reactions.

Old Steve, that’s just it. Relativity theory explains the behavior of some things in physics, quantum mechanics explains the behavior of other things, and the two theories contradict each other right down to the core. The devil settled into his usual residence in the details, and has been rubbing his hands and cackling as he watches scientists frantically try to reconcile two irreconcilable models, all the while pretending to the rest of us that everything was just fine.

IBEW, delighted to hear it. Does your hall still use the old ritual for its meetings? As late as the 1970s, I’ve read, most IBEW halls still did so, and the ritual — I have a bootleg copy — is absolutely standard fraternal lodge stuff. Here’s an image of the pages giving floor work for the hall opening and the initiation ritual:

Longsword, excellent! I’ve used that myself — Joseph Max and I once did a radionics demonstration at a Pagan convention wearing white lab coats, the ritual garb of science. It really did add something.

Harry, granted, but in this case it’s a little more than that. Kepler wasn’t just influenced by geometry — although he certainly was; he was also influenced by current fashions in architecture and popular culture.

Celadon, excellent! Your son is now much better equipped to deal with the world.

Mister N, we’ll be talking about that as this sequence of posts continues.

Your Kittenship, that’s a classic case of apportation. Back in the day people assumed that fairies did it; no doubt some kind of fancy way to avoid talking about conscious beings will be invented if the phenomenon ever becomes so common that it can no longer be denied.

Siliconguy, oh, good gods, yes. The intrusion of politics into science and engineering has become really blatant of late — with predictable negative effects on the quality of science and engineering.

Kurt, about time! The entire expanding-universe business was so obviously a projection of Faustian notions into the cosmos that I’m surprised anybody took it seriously.

Bradley, that’s a complicated subject and one that will require a lot of discussion down the road a bit. The short form is that the placebo effect is one of the standard modalities of magic, and the fact that it works when it’s the doctor who believes in the drug shows that magic works.

Justin, glad you liked it! I got the graphic of the celestial monochord from your blog, btw. 😉

Markéta, that’s a good point. Back in the day, astronomers did very good planetary math by assuming that the planets moved in a circle whose center rotated around another circle; there are doubtless an infinite number of other ways to do the thing, too.

George, that seems embarrassingly likely.

Degringolade, I know he reads my blog, and I certainly read his, so you’re not entirely wrong…

Kimberly, I don’t think it’s going to take a couple of hundred years. If things continue as they’re going, there could be violent blowback within this decade. I hope not, but that’s what it’s looking like.

Ken, thank you.

Joshua, numbers are just another human language — a very useful language, and one that can say some very important things, but they’re still just a set of abstractions in human minds. You might as well say “trust the English language!” The terrible and wonderful reality of human existence is that we have no direct access to truth — all we have are parables, metaphors, symbols, and stories.

Justin, that’s a major hope of mine, and one I’m working on.

Yes ! A return to the Enchantment series. I really enjoyed the first sequence, and if more pressing issues make you put it aside again in the near future (very well might), that’s OK, as long as it’s not indefinitely shelved 🙂

Sidenote : Recently you mentioned some readers from wayback who were asking “whatever happened to our JMG of old ?” Well it so happens that since I discovered Ecosophia (Aug 22), I’ve read it and then jumped to The Archdruid Report (currently Jan 2013, I go backwards) and I can say I don’t agree with ’em. To each his/her own, but I find your current work much more potent.

Thank you for everything, and please do keep up, onwards and upwards, just like our civiliza… Wait.

I think you are right, but I do wonder about the hidden positive benefits of the enchantment of science, specifically when combined with Protestant religion. It certainly seems to have neatly killed off experimentation in fields of knowledge that would have been utterly disastrous to us as a species, far more so than the not so great effects of environmental pollution and heating up the planet. If so, this cycle of enchantment, for all its frustrating effects on those of us that think differently, may have had an intended effect.

Yes, yes, yes! Just like Marxism has been a religion in its own right to replace the traditional religion, so too, Scientism has been the new enchantment to replace the traditional enchantment. To my mind, both Marxism and Scientism are kind of like fake meat: over-processed and of questionable nutritive value. Humans cannot live without enchantment: it’s just that some enchantments are better for the soul (individually) and society (collectively) than others. I’ll take the traditional enchantment over the modern one any day!

Speaking of enchantments, yesterday I came across a surprisingly insightful column in a major Canadian newspaper (of course, it was a tabloid which only ‘peasants’ read) which stated that given Prime Minister Turd’s obsession with Global Boiling and his determination to force 90% of the public and industry into penury through ever-increasing carbon taxes, the man is clearly a fanatical adherent to the Church of Climatology. I had a good giggle over that. His eyes certainly have that abstracted glaze of a true zealot.

“…the enchantment of science is breaking down around us right now. We’ll talk about how that’s happening”. I can say it in two words: honk, honk! 😊 (reference to the truckers convoys against the mendacious mandates) That, at least, seems to be part of the process: the ‘grubby peasants’ of Monty Python’s Holy Grail (truckers and other blue-collar workers who have their feet firmly planted in the reality they see around them) called ‘bovine excrement’ on the pronouncements of the ‘hoity toity’ supreme authorities in the medical establishment (in their ivory tower – or is it glass house?) and their enabling governments, and proved its falsehood by gathering in the tens of thousands in close contact with each other, including hugs galore, for weeks on end without the slightest increase in cases of the dreaded ‘cooties plague’ in Ottawa back in the winter of ‘22. Nothing like a good 114 dB honk – or a thousand – to help break an enchantment!

Haha, excellent post JMG! “Trust the science” took quite a hit with the “vaccine” debacle. As one trained in science at (famous) University who took a very different path, I get a kick out of scientific scams like the ridiculous claim that they had found a room temperature superconductor, or that Musk was going to take people to Mars by 2025… I also enjoy annoying my friends with astrology, which has proven quite useful despite its lack of peer reviews… Kudos, though, to Einstein, who couldn’t believe in “spooky action at a distance” but suggested an experiment that proved it true through entanglement… Whatever his faults, Einstein was a true scientist…

The antidote I’ve always used to belief in the current claims of “Science”– in particular, its models of the universe– is simply to imagine the same science performed continuously over 100 years, 500 years, 1,000 years, 5,000, 10,000, 50,000, 100,000 years, and so on. Are we to suppose that the scientists of the year 200,2024 have the same model of the cosmos that ours do, or even one slightly resembling it? I then imagine the same sciences being performed by beings more intelligent than humans– say, with an average IQ of 200. Would they come up with the same models that we have? What about beings with an IQ of 500? 1000? What about beings whose reasoning capacities are to ours as ours are to single-celled organisms? And what about the beings who are to those beings as those beings are to us? These chains can be extended indefinitely, even if there are no such beings in the cosmos, or in any imaginable cosmos– the knowledge still exists in potential. Suppose beings who are to the beings who are to the beings who are to the beings who are to us as we are to paramecia have been investigating the nature of reality for one million years. What does their universe look like? Do we really think it has even the slightest resemblance to the universe of Neil DeGrasse Tyson? This would make poor Mr. Tyson among the most knowledgeable beings possible, in any possible universe, and that would make our era of history the most privileged imaginable. So much for the Principle of Mediocrity! Can anyone believe such a thing?

I have also been reflecting on when science may have gone wrong. My take is that the 19th century experimentalists certainly retained an awe and respect for Nature, and they didn’t wish to see it lying bleeding and cut up into little bits on the ground. For instance, Michael Faraday apparently talked about a thing called the “electrotonic state”, which underlies all electrical phenomena. More than a century later, Richard Feynman said he wished he’d understood physics naturally as potentials, instead of fields or forces.

Those of course are all variations of aether theories, and the aether still sits there quietly in quantum physics, called the vacuum, while people try to forget about it.

I’ve been trying to trace where it went awry, and I put it down to Heaviside’s reformulation of Maxwell’s original equations. Maxwell’s equations – those things that underpin the modern world – were in quaternions, a mathematical formulation that does not close off other worlds; however, they are fiendishly difficult for most to interpret. So Heaviside, a staunch materialist, simplified the difficult parts of Maxwell’s work, and replaced the more exotic concepts like pure magnetic fields with mathematical abstractions that could easily be, and were, ignored. Doing so created the modern world, but it seems to have been a bargain, because after that, experiments that were shown to be inconsistent with Heaviside’s reformulation were ruled to be wrong. And still are, outside of the quantum world, which seems to allow for some madness over very short distances and timespans.

Some people have been trying to rework the equations to provide for more, there’s an outstanding paper below. I checked its citations though – only 2. Still forbidden 🙂

https://iopscience.iop.org/article/10.1088/1742-6596/1251/1/012043/pdf

Your Cute Kittenship,

(I hope this is a proper way of greeting, if not, excuse me, please, I am not a native English speaker:o))

There is a perfectly scientific explanation of the glasses disappearing; little black hole formed next to them and sucked them in. Now, the timely return might be explained as the little black hole becoming unstable and changing into a wormhole…which returned your glasses and crawled away.

Now, if we suppose this is what really happened, the only remaining question is, why on Earth would St. Anthony read our scientific papers?

Have a nice day! :o)

Markéta

>That and I like standing in the middle of operating large scale machinery

You don’t need a PhD for that, just work in construction.

>The models they’ve created do an excellent job of predicting the behavior of many things in nature—but again, that doesn’t make those models true. It just makes them useful.

Although give them credit for getting as far as they have. It’s like trying to figure out how a car works without ever getting to look under the hood, but instead crashing cars at each other and looking at the debris left behind. In fact I wonder if we don’t really have a theory of the nucleus, so much as a nuclear crash theory, what kind of bits and bobs you’ll see from a crash. A piston rod is a piston rod, no matter what but I wonder if they don’t quite fully understand the role it plays in the engine. If we could only look under the hood…

My criticism of science in general is that even when parapsychic phenomena are reproducible, they still refuse to study them. That, IMHO, is inexcusable.

The whole idea of the western experiment is an interesting one, because underneath it is the inherent assumption that something that occurs in a unique point of time under a unique set of conditions is then applicable to all times and all conditions. As you say it doesn’t mean anything is true, all you can take is a useful technical assumption forward.

This is quite hilarious when you step outside the Faustian bubble, because the same thing applies to western jurisprudence. A precedent set by a judge under one set of circumstances is then applicable down the ages under a similar set of circumstances. It’s all Faustian will to duration, and something the Greeks and Romans certainly opposed. Classical jurisprudence is such a refreshing change in its utter pragmatism for the individual case before it.

@Joshua (#18)

If we just follow the money, the Almighty Greenback itself can give the ultimate word on who/what to trust:

‘In God we trust’ [*AHEM* …all others please pay cash]

Following on from my first comment about trusting the numbers, F. Scott Fitzgerald said you can either place your trust on “the hard rock or the marshy shore.” It’s an apt metaphor for this week’s post. If we can no longer believe in the hard rock of science (which turns out to be just another enchantment) what should we then put our trust in?

Thibault, glad to hear it. In place of “onward and upward,” I offer an alternative from a long-ago Archdruid Report post:

“Who is the hero or the heroine who will turn the pages of the long-lost Gaianomicon, use its forgotten lore to forge a wand of power out of the rays of the Sun, shatter the deceptive spells of the lords of High Finance, and rise up amidst the wreckage of a dying empire to become one of the seedbearers of an age that is not yet born? Why, you are, of course.”

Peter, oh, I don’t think it was a mistake at all. For any number of reasons, the particular enchantment we’ve been under was appropriate for its time. It’s just that its time is over.

Ron, what you’re saying, then, is that science is spam-chantment. Gotcha. 😉

Pyrrhus, if Einstein were a young scientist today there’s no way he would get published in the journals. The spam-chantment protects itself against genuine scientists!

Steve, that’s an excellent point!

Peter, that’s an interesting question. I don’t think there’s a point when it “went wrong” — no matter what, any set of human models was doomed to be too simple to make sense of the universe, for the simple reason that human beings simply aren’t that smart.

PumpkinScone, exactly! The Faustian will to infinite extension is also a will to power, and that shows up embarrassingly in both these cases. Notice that our jurisprudence doesn’t accept Roman precedents…

Joshua, the problem is that science isn’t actually a hard rock. It’s a marshy shore with a bunch of people in white lab coats standing on it, up to their knees in mud, loudly saying “My feet aren’t a bit wet!” There is no hard rock — that’s the wonder and the terror of human existence, and it’s also the meaning of that much-abused word “freedom.”

It struck me from the first that Dr. Mabuse’s, er, Tony Fauci’s holy mantras, dutifully repeated by the regime media, of “I am the science! Trust the science!” were little more than pathetic attempts at enchantment. I myself did not find them particularly enchanting, however.

I am surprised no one has mentioned that blatant superstition of Scientism: the Occam’s Razor.

As an engineering principle (and early scientists had to have a bit of tinkering spirit in them; there was no industrial ecosystem to provide the trinkets they needed for their experiments, after all) the Occam’s Razor is as sound as it is pragmatic. Every moving part you add to a new design is another component that will require ongoing maintenance in the best case, and more likely than not another source of potential failure as well. In my current profession, it is said that it is twice as hard to debug a computer program as it is to write it in the first place; so you must write it in such a style that you’d imagine a halfwit to have done, in order to be able to contend with it at the full of your brain power when the time comes to fix it.

But if you are in the business of describing the external world AS IS, the Occam’s Razor is pure hubris. Not only must these realities be cognizable by the human brain, but they surely must be easy to grasp as well. Moreover, if scientific knowledge compounds over time (because you will use scientific doctrines as axioms in the construction of your hypothesis), the constant application of this principle by generations of scientists turns into a one way ticket to Dumb-down-ville; a Tower of Babble, so to speak.

Now, if we could repurpose the scientific method (with lowercase ‘s’) as a way to build “astral machines” that help us give rhyme and reason to the torrent of sensory inputs Reality presents us with …

I think I have posted these quotes before, but they are very fitting for today’s post so I’ll take the risk to repeat myself:

“… it seems probable that most of the grand underlying principles have been firmly established and that further advances are to be sought chiefly in the rigorous application of these principles to all the phenomena which come under our notice.” (A.A. Michelson in 1894, Nobel prize for physics in 1907).

“To upset the conclusion that all crows are black, there is no need to seek demonstration that no crow is black; it is sufficient to produce one white crow; a single one is sufficient.” (William James)

“I’m still confused, but on a higher level.” (Enrico Fermi)

I present these three quotes to all of my older students at some fitting moment during their physics classes. You can’t mimic a white crow moment, of course, but it helps to have heard that they exist. Likewise it is good to know that even the most eminent scientists were prone to make complete fools out of themselves, and lastly it’s not bad to have a simple working maxim that might help you avoid making a fool out of yourself and stay curious instead.

Well, there’s a saying that you sometimes hear in the German alternative medicine, spiritual healing scene: “Wer heilt, hat recht.” – “Who heals is right.” It’s interesting to notice, how quickly you shut the doors again once you have escaped one chamber of ignorance to the next. I find it to be understandable, though, for to endure the pain of the fire of not knowing can be a burden hard to bear.

Lastly, for all who might be interested in this, I’d like to point to the exchange of letters between Pauli and Jung, which in an English translation can for example be found here: https://pubs.aip.org/physicstoday/article/55/9/62/757315/Atom-and-Archetype-The-Pauli-Jung-Letters-1932

Judging from what I have read so far, Pauli was, by way of exploring his unconscious mind with the help of Jung and his students, an enchanted scientist in the way that he could see enchantment of nature. Others might have been as well, as the occasional quote from Pauli’s or Jung’s meetings with Bohr, Einstein, etc. suggests. Beyond that you will find Fludd, Kepler, Kant, Schopenhauer, UFOs, synchronicity, alchemy, divination, astrology, dream work and sophisticated hypotheses on how the (collective) unconscious and the world of matter might have common roots in the book. Truly a wild ride.

Cheers,

Nachtgurke

>There’s more than this going on, of course. I could fill an entire post quite easily with signs of crisis from the nations of the modern industrial West, and another post with the evidence that much of the rest of the world is prospering as our decline picks up speed.

And this is the song I listen to while reading those paragraphs.

https://yewtu.be/watch?v=7Sw9Fh6uk4Q

The book Art & Physics by Leonard Shlain is a breezy survey developing the thesis that ideas that first stir in the minds of artists often soon wind their way into the theories of natural philosophers and scientists. A casual beach read for folks who enjoy thinking about these sorts of connections.

Thanks, JMG. Now I am going to have the Monty Python Spam skit going through my mind all night long… 🙂

Great post. I know personally what it feels like to move away from a cold, materialistic view of the cosmos. As I’ve said before on this blog, I used to be a staunch materialist, but after doing a lot of introspection and reading philosophy, I had to reject materialism. Now I view the universe as a part of the Absolute, pure infinite consciousness. The entire cosmos is full of life and spirit. It IS life and spirit. This paradigm shift was hard to pass through, but it absolutely changed my life.

I remember when I first learned of the idea that science is socially constructed like any human effort and that it doesn’t give us a real objective, third-person view of the world. It was a few years ago in a philosophy course when I was still a materialist. Back then I bristled at the idea, but now I have come to terms with it. It doesn’t make science useless, but it means we have to practice more humility and understand that science is deeply interwoven with cultural biases, class relations, and societal values. It has fundamental limits, like every other way of human knowledge.

Science is a toolkit that is used to create models that describe what we observe, nothing more. As you said, the models are useful, but not necessarily true. Science describes how reality seems to behave, but it doesn’t uncover the fundamental nature of reality. When we forget that our models are convenient fictions and not the real reality, we replace the territory with the map and end up with materialism.

I was reading a book by Jacques Vallee. In one part he made an argument that science is not equipped to properly investigate the UFO phenomenon, because science has been most successful with parts of the universe that behave mechanistically, without intelligence. Think of the ‘hard sciences,’ like chemistry, geology, meteorology, physics. Science has a harder time with things that behave intelligently and reactively; think of the ‘soft sciences’ like psychology, sociology, history, economics, etc. Science would have an extremely hard time investigating something that is intelligent and doesn’t want to be found. Just to be clear, I don’t believe the UFOs are spaceships from another planet; I think they’re the manifestation of some kind of anomalous intelligence that has always been here.

Sorry for the long comment, you gave me a lot to think about.

Do apportations normally take 4 months, less time, or more time? How do they happen?

—Princess Cutekitten

The corruption of “science” is well recognized by those who might be considered “truth seekers”, like doctors trying to do right by patients, engineers who want stable long-term structures, and others on the front line of practical application. For a few decades, plentiful articles in medical journals have documented the bias of industry-funded articles and highlighted the “replication crisis,” in which perhaps the majority of publications fail attempts at confirmation. This is so well established that essentially all medical practitioners are aware of the problem. Similar issues are seen in many other fields, including nutrition, agriculture and public health.

To some, perhaps idealists, science is a method of inquiry, an attempt to learn while limiting bias. Open access journal articles, mostly NOT peer reviewed, have developed in response. They are like the wild west of science – some are legit, some frankly falsified, some funded, biased and scripted by the powerful. Good luck figuring out what is what, though there is some helpful raw data out there for perusal, where time for thoughtful study and expertise in questioning methods and conclusions, helps understanding. Humility may not count for much in the opinion of the powerful, but certainly applies in practical applications.

Bradley in comment #11 notes that the placebo effect is getting stronger. I was interested by this, and looked it up. It does indeed seem to be a factor – but they’re focused mainly on pain medication. It’s not clear from a quick websearch whether the placebo effect has changed for things like anti-nausea medication, anti-cancer and the like. Just pain.

https://www.sciencealert.com/the-placebo-effect-is-somehow-getting-even-better-at-fooling-patients-study-finds

In my work as a trainer, and working with people with past injuries or chronic illnesses, I’ve had many occasions to encounter this. The interesting thing about pain is the large psychological component. Doctors will tell you that if they have a patient with anxiety or depression, even a needle injection causes them great pain. But confident people with good family connections can have horrible injuries and relatively little pain.

With that in mind, we can ask: what is the psychological state of the people being studied? And this ties in with things like an increase in self-harming behaviours among younger people across the world – which this author quite plausibly ties to smartphone usage.

https://jonathanhaidt.substack.com/p/13-explanations-mental-health-crisis

Now thinking further, is the increase in placebo effect against pain stronger in people who have more mental health issues? Well… going back to the Science Alert article, it’s stronger in the US. They’re not seeing an improvement in placebo effect in other countries. The country where the culture is the most individualistic and atomised, which has the most physical pain because of various physical ailments and the most anxiety and depression, also has the most improvement in pain symptoms with placebos.

What I think is that humans are ritual creatures. Participating in rituals with families, communities and priests makes us feel better. Some will take wine and wafer as part of Sunday Mass with a man in a frock. Others will take a white pill as part of a surgery consult with a woman in a white coat. In each case, the adherents of the faith firmly tell us that what is being consumed has properties which will change once consumed, and change the person in the process. Any doubters are angrily dismissed.

Thank you again, JMG, for taking a complex conclusion and guiding a step by step, easy to follow path to understanding. The images are often hilarious and engaging.

Michelangelo: David is best portrayed as a static pose embodying the ideal structure of a human male in his prime.

Bernini: That’s, like, just your opinion man!

I don’t see how the exploding government debt and Detroit-style collapse are the fault of scientism. If the connection is that they’re all tentacles of the same hideously colorless eldritch beast of Elite Overreaching, fine. But all that seems only tenuously linked in this particular essay.

Continuing with military doctrine, you and commentariat might be fascinated by U.S. Air Force Colonel John Boyd’s collected works. Most of the items are formatted as slides for talks he delivered in person. Printed page 283, pdf page 290 (“Compression”) is a tour de force of synthesized concepts about conceptual synthesis. Boyd demonstrates far more humility in the face of the unknowable than the “trust the science” preachers of mass-media enchantment.

https://ooda .de/media/grant_hammond_-_boyds_discourse_on_winning_and_losing_a_newly_edited_version_with_an_introduction_afterword_index_and_bibliography.pdf

My college years were sold to me and my parents as promising the skill set and opportunities described by IBEW Lodge 18 # 3. Of course, no refund was available when I reached the end of doing as told, and there was absolutely no next step into a sustainable career path. My disillusionment… disenchantment… with doing as told by elites took a quantum leap, or relativistic jump, into new depths of disappointment around then!

For those looking for an entertaining walk through the foibles of the scientific priesthood and religion: the book Arrowsmith by Sinclair Lewis!

It allowed me to be ready for today’s Ecosophia post and subsequent, “Of course!” moment while reading – and I read it 30 years ago when I was but a wee lad.

JMG, I respectfully request you consider a new rule for the comments sections on your blogs:

That they are places for human beings to discuss ideas developed, or explored for conversation here, by the minds of human beings alone.

And thus, that presentations of ChatGPT’s word salads, or other generative AI content, be entirely prohibited here, UNLESS the comment is clearly about an individual human’s evaluation of AI-spewed material. Not just copy and paste of what AI copied and pasted out of its archive given a prompt.

There are already other places on the internet for “let’s see what training contents got barfed up by The System, when I gave it my prompt.”

My favourite period in British history is the time when science really was indistinguishable from magic. As a kind of pilgrimage I’ve visited Merchiston Castle in Edinburgh, home of John Napier, and Dr. John Dee’s study in the former collegiate church in Manchester.

JMG

This gets into a specific cultural assumption with the way Faustian culture thinks (even in its philosophy) in relation to the past and future. It takes both to be as physically real as the sensory present, which of course is debatable as both only exist per se on the other planes. I think from this arises its whole obsession with progress, precedent, ‘laws of nature’ etc., and every single field of Western European thought has this implicit assumption and extension through time. It cannot be escaped unless you for a second stop imagining that the past and the future exist.

I think this is perhaps the most clear differentiation between Faustian thought and whatever is arising in the USA, because it seems to me that the heartland Americans certainly do not share this European obsession with past and future, and in fact have more in common with the Classical love of the present. Europeans deride Americans as ahistorical but that’s the whole point; the precise, lovingly recorded history as we know it is a European obsession. As is the focus on future worlds.

Many people fail to understand that science of itself does not, and never can, establish a particular view of the ultimate nature of reality. What it does – and this is one of the supreme cultural as well as intellectual achievements of mankind – is reduce everything it can deal with to a certain ground floor level of explanation. Physics, for example, reduces the phenomena with which it deals to constant equations concerning energy, light, mass, velocity, temperature, gravity and the rest. But that is where it leaves us. If we then raise fundamental questions about that ground floor level of explanation itself, the scientist is at a loss to answer. This is not because of any inadequacy on his part or on science’s. He and it have done what they can. If one says to the physicist “Now please tell me exactly what is energy? And what are the foundations of this mathematics you’re using all the time?” it is no discredit to him that he cannot answer. These questions are not his province. At this point he hands over to the philosopher. Science makes an unsurpassed contribution to our understanding of what it is that we seek an ultimate explanation of but it cannot itself be that ultimate explanation because it explains phenomena in terms which it then leaves unexplained.

Thank you very much for continuing the enchantment series!

Your insight that social determination of science has increased over time is glaringly obvious – once one has read it! The argument becomes even stronger when considering peer review for grant approval, which is less transparent and involves more judges than does peer review for publication. Nowadays, results can always be published somewhere, but it becomes more and more difficult to do a worthwhile experiment without a research grant. Many low-hanging fruits have already been picked…

Independent gentlemen (and rarely -women) doing experiments on their leisure time and money suffered much less influence from society at large, though they were of course also conditioned to a degree by their own social class.

As someone with a scientific background I concur. It always seemed odd to me that modern materialism took one of the many heuristics of renaissance occultism, stripped it of its original esoteric context and made it into the only acceptable means of gaining truth. Why that one specifically, I wonder. For fun I’ve tried speculating about a society where instead of the scientific method some other esoteric tool, say astrology, was adopted as THE engine for truth by materialists. Big budgets building the Large Natal Chart Assembler to the tune of billions of dollars I suppose!

Cheers,

JZ

Most noteworthy Archdruid, your essay this week corresponds quite nicely with some thinking I’ve been engaged with, stimulated by a couple of recent comments over in your Open Post on Covid concerning Biomarkers. There was a suggestion there that human health is promoted by judicious sunlight exposure to one’s skin, and that since your body, among several things, manufactures Vitamin D during sunlight exposure, that the System™ has decided that low Vitamin D levels are BAD and higher Vitamin D levels are GOOD since people with higher Vitamin D levels are measurably healthier so therefore: we should consume supplemental Vitamin D to be healthier. But what if better health is the result of more-complex interactions, and Vitamin D levels are just an indicator, a biomarker? That might make supplementing with Vitamin D a fool’s choice, mistaking the map for the territory as it were.

So many other things pop out. High blood pressure? The numbers generated by athletes during heavy exercise would be assessed as cause for immediate admission to the ER. You’re gonna blow a blood vessel and have a stroke! But what if strokes are caused by WEAK blood vessels rather than higher pressure on said vessels? This seems a quite concise reason why likelihood of stroke is NOT reduced when taking statins, which definitely lower blood pressure (while having all sorts of other less-than-desirable consequences.)

The guide seems to be: if it’s easy to measure (Vitamin D level, blood pressure, cholesterol) then the medical and scientific community will quickly fixate on the measurement rather than seeking to understand the more-subtle reasons. Noting that, as you say, any given hypothesis may be useful (for a while) even when it’s not true, I conclude we’re in for quite a jolting ride as so many things which were declared as true-True-TRUE develop cracks and ultimately get thrown in the dumpster.

Best regards as always!

Your observation that the (old) enchantment was breaking down before the scientific revolution surely needs to be complemented by another observation that you have often made: that the (old) enchantment didn’t go away as far as text-books make it seem. The Royal Academy of Sciences pushing belief in material spirits, Newton’s astrology and numerology, the great scientist and mystic Swedenborg, Goethe’s alchemy, William James’ New Thought, Heisenberg and Schrödinger’s quantum mysticism…

Science: “Have I ever lied to you before?”

Me: “How long have you got?”

I would always cringe when a Christian would say something like ‘see archeology proves the Bible’ (usually because archeologists found something that supported what was in there) as it seemed to me to be subordinating the ‘timeless word of god’ to the intellectual fashions of man. After all, if you grant science the power/authority to ‘prove’ you equally grant them the same to cancel/disprove.

More recently if you use people’s faith in science to bolster their faith in your religion and they lose their faith in science … well let’s just say it isn’t helpful to your cause!

Alan, their sorcery apparently worked on a lot of people. I’m still not entirely sure why, though I have some hypotheses.

CR, a good point. Occam’s Razor in science is nearly the last word in anthropocentrism — a demand that the universe must be as simpleminded as human beings are.

Nachtgurke, thanks for these. The Jung-Pauli correspondence is to my mind particularly worth a close read.

Other Owen, funny.

Frank, thanks for this! I’ll have a look at it, if only to get more examples.

Ron, just one of the services I offer. 😉

Enjoyer, no need to apologize, it’s a useful comment. Was the Vallee book Messengers of Deception? That was where I first encountered the idea that the scientific method becomes useless if the phenomenon it researches is deliberately trying to avoid being understood. It’s a crucial point, and one with many applications.

Your Kittenship, nobody knows how they happen, just that they do. As for how long they take, it varies.

Gardener, I’ve been pleased to watch the rise of publication channels outside the peer review system, although of course that also has its problems. It’s one of the things that makes me hope that we can save something out of the wreck of institutional science.

Hackenschmidt, thanks for this. If you find anything more about changes in the placebo effect, please let me know — that’s a theme of some importance for the project of this blog.

Christopher, I’ll be exploring the connection between scientism and the failure of government in a future post. As for AI, I’ve been doing my best to eliminate AI-generated spew as it comes in.

Tengu, excellent!

PumpkinScone, that’s a fascinating point. I think you’re onto something very important here — a crucial indicator of the collapse of the Faustian pseudomorphosis in North America.

Robert C, exactly. Science is a source of useful models — what you’re describing as a ground floor of explanation. In the fields where it works — a minority of human experiences, but a significant subset — it’s better at producing useful models than any other gimmick our species has come up with. It’s no criticism of a hammer to say that it makes a very poor saw, and it’s no criticism of science to point out that it makes a very poor source of truths.

Aldarion, that’s a good point and one I should have included in the post.

John Z, now that’s an alternate-history story I’d read!

Bryan, excellent. That’s a crucial point, and reminds me of the old parable about the drunk who was looking for his keys under the streetlamp. Somebody asked him about the details, and he admitted that he’d lost the keys in the alley — but under the streetlamp there was enough light to look for them! In the same way, science fixates on what it can measure most easily even when that’s not the cause…

Aldarion, granted, and that’s a point I made earlier in this sequence.

Dreamer, and it’s likely to end up fatal to quite a number of causes as we proceed. More on this shortly!

“The models they’ve created do an excellent job of predicting the behavior of many things in nature—but again, that doesn’t make those models true. It just makes them useful.”

This is a profound point that is not well-taught in our education system. Too many believe science is fact. Or that scientific theories explain how the universe actually works as opposed to being our best guesses at how it works. All of our science remains abstractions about the universe–not the universe itself.

For me, realising this led me to a more scientific mindset where I thought far more critically about the science I was exposed to as opposed to blindly accepting it. The latter isn’t science but scientism–a new religion to replace the old ones.

I simultaneously came to think that science, when conducted as it is supposed to be, is one of humanity’s strongest tools for creating understanding, while also realising that science, as it is too often conducted in the contemporary environment, can be incredibly problematic. (I have seen the latter referred to as Science™.)

Usually, JMG, I couldn’t have written any of your essays because you think about the world quite differently than I do. But on this occasion, it is one of the few that aligns very closely with much that I have thought about over the past five years. Looking forward to future entries on this topic.

“the enchantment of science is breaking down around us right now”

Indeed.

Another Ecosophia post synchronistic to my reading! I’m working through Lee Smolin’s Time Reborn. He’s a materialist physicist, but he does a fine job of pointing out the fallacies under which physics has operated since Newton, such as what he calls “doing physics in a box,” then pretending that the results can extend to the whole universe, a universe in which the measurer and the measurement tools are part of the system being measured, the starting conditions are unknown and unaccountable, and there is crucially only one UNI-verse, which means at a whole-system level there can’t really be any such laws, because a law is a general approximation observable across many different events, and there is no other universe to compare ours to, making anything at the level of the whole a mere observation.

He does a fine job of showing that contemporary assumptions can’t even stand up to an argument from the basis of physics, without having to resort to philosophy or magic (which further obliterates the assumptions).

Yes, I think it was Messengers of Deception. I have read Passport to Magonia as well.

Vallee is actually really polarizing in Ufology circles today. There is a divide between the materialist ‘nuts and bolts’ ufologists who believe the phenomenon is advanced tech from another planet, and the ‘woo woo’ crowd who believe that the phenomenon is psychic/spiritual.

I think both of those crowds are ultimately driven by wish fulfillment (which is what makes the debate so fierce). The ‘nuts and bolts’ crowd hopes we get access to all this alien tech and thinks it’ll solve all of our problems. The woo-woo side is hoping for a mass spiritual awakening.

I lean toward the woo-woo side of the aisle. But I don’t believe in a mass awakening. Whatever the phenomenon is, it’s elusive and cryptic on purpose.

A bit off topic, John, but what do you think about crop circles? Are they all fakes? If not, what makes them?

Hi JMG,

I’m glad you brought up Johannes Kepler in this post. He’s an interesting figure to look into, since enchantment is still present in his work. To add more to what Aldarion and John Z were saying, Kepler was also an astrologer for a Bohemian general. Kepler believed that there was an order to the heavens and even ascribed musical notes to the planets. He worked for Tycho Brahe (an alchemist) and used his observations to confirm that the planetary movements were ellipses, even though Tycho Brahe’s own model said the planets revolved around the sun while the sun and the moon revolved around Earth, a little different from Copernicus’ heliocentric model. It’s an interesting story with lessons for us about competing ideas in science and the influence of existing ideas in science. I view Johannes Kepler as a conflicted man sitting on the fence between two worlds.

SD, the ideas in this post are not at all original to me. Thomas Kuhn and Oswald Spengler get most of the credit, and of course there’s always Nietzsche laughing mordantly in the background, and behind him Kant sweeping up the dust of our hubris and putting it into a neatly labeled bottle. I wouldn’t even bring them up here except that so few people have ever encountered them.

Blue Sun, now and then I remember Roddy McDowall as Mordred in Camelot, saying: “Your table is cracking, Arthur. Can you hear the timbers split?” The enchantment of science was the greatest thing our civilization has accomplished; yes, it’s become an intolerable burden and a catastrophic source of bad advice, but there’s still a great deal of tragedy in its fall.

Kyle, hmm! I may want to read that as time permits.

Enjoyer, oh, I know. Vallee and John Keel are two of the baddest of the bad boys of Ufology, and they’re also the two writers whose work I like the most. As for crop circles, “fakes” is really an unnecessarily harsh way to describe those marvelous works of performance art and ritual. Yes, they’re all made by human beings — by the time I visited Somerset in 2003 it was already an open joke among the locals that farmers were placing orders for the next year’s crop circles, since they were so lucrative to show to tourists. Have you read Jim Schnabel’s book Round in Circles? Worth your while.

Sean, Kepler was a fascinating guy. Did you know that he introduced several new aspects in astrology, alongside his contributions to astronomy? Like most astronomers in his day, he cast horoscopes to pay the bills. I don’t see him as conflicted, because in his day there wasn’t yet a conflict: astrology was understood as applied astronomy, and everyone used it.

At this link is the full list of all of the requests for prayer that have recently appeared at ecosophia.net and ecosophia.dreamwidth.org, as well as in the comments of the prayer list posts. A printable version of the entire prayer list current as of 11/8 may be downloaded here. Please feel free to add any or all of the requests to your own prayers.

If I missed anybody, or if you would like to add a prayer request for yourself or anyone who has given you consent (or for whom a relevant person holds power of consent) to the list, please feel free to leave a comment below.

(Also, if you think you might be interested in having anyone pray in support of your own self-improvement, please have a look at the Ecosophia Prayer List Autumn Special.)

* * *

This week I would like to bring special attention to the following prayer requests.

May the lawsuit for partition of the family land in which Jennifer, her husband Josiah, and her father Robert are involved be resolved justly and for the greatest good of all involved, including the land and its spirits.

May the brain surgery that Erika’s partner James underwent for his cancer on October 16th have gone successfully; and may he be blessed, healed and protected, and successfully treated for all of his cancer.

May Kyle’s friend Amanda, who though in her early thirties is undergoing various difficult treatments for brain cancer, make a full recovery; and may her body and spirit heal with grace.

May Jeff Huggin’s friends Dale and Tracy be blessed and healed; may Dale’s blood and spinal fluid infection clear up sufficiently to receive a heart valve replacement; may his medical procedures go smoothly and with success; and may Dale and Tracy successfully surmount these difficulties.

In the case of Princess Cutekitten and the large bank who is suing her may justice be done, with harm to none.

Lp9’s hometown, East Palestine, Ohio, for the safety and welfare of their people, animals and all living beings in and around East Palestine, and to improve the natural environment there to the benefit of all.

* * *

Guidelines for how long prayer requests stay on the list, how to word requests, how to be added to the weekly email list, how to improve the chances of your prayer being answered, and several other common questions and issues, are now to be found at the Ecosophia Prayer List FAQ. If there are any among you who might wish to join me in a bit of astrological timing, I pray each week for the health of all those with health problems on the list on the astrological hour of the Sun on Sundays, bearing in mind the Sun’s rulerships of heart, brain, and vital energies. If this appeals to you, I invite you to join me.

Excellent essay today, JMG! I’m going to draw Arthur Firstenberg’s (author of The Invisible Rainbow) attention to this, at the risk of it depressing him more than he is already. He was the one who first pointed out to me 25 or so years ago that it doesn’t seem to matter one bit how much evidence of harm from EMFs (specifically, artificial, polarized, pulsed, modulated with random variation in the ELF range) exists, the Powers That Be have a broad brush they can paint it all with as “tabloid pseudoscience,” with examples of such publications available at checkout counters everywhere for all the world to gawk at. I was naive enough then to think that with so much evidence, it would just be a few years before people caught on.

I was going to hold off mentioning a paper by Panagopoulos et al. (2021) until next week, but now I don’t have to. I am working on a summary of their highly technical paper with a couple dozen mathematical formulas describing in detail the mechanism of how these EMFs can exert a non-thermal effect on cellular biology via the voltage-gated channels that help cells maintain a proper electrolyte balance. (Another possible mechanism of DNA being more conductive than thought is favored by Firstenberg, but the voltage-gated channels hypothesis also accounts for DNA damage via oxidative stress.)

The reason the activists consider Panagopoulos et al. to be revolutionary is that we would point out all the in vitro, in vivo and epidemiological evidence of harm only to be told there was no possible mechanism for it, end of discussion. Now there is a well-described, documented (shy of 300 references) mechanism. (I’ll post the summary once I’ve got it hashed out, but anyone with a technical background can have a look at their paper.)

So they’ll just move the goal posts again. If our scientists posed any real threat to the status quo, they’d be jailed together with the journalists.