Most of the time, in writing these essays, I try to treat the decline of industrial society with the seriousness that it deserves. Sometimes, though, the plain raw absurdity of our current situation rises to a point that only raucous laughter can address. I ran into another of those points a few days back, while reading an article on Yahoo News sent to me by a longtime reader and commenter—tip of the hat to David By The Lake. The article is by Hasan Chowdhury, and its title is “Humanity is on the brink of major scientific breakthroughs, but nobody seems to care.” You can read it here.

Chowdhury’s article points out that recent news stories about the latest heavily promoted claims of a breakthrough in nuclear fusion research, and the much-hyped announcement by two South Korean researchers that a room-temperature superconductor had been discovered, didn’t get the response the media expected. By and large, people yawned. To Chowdhury, this is appalling, and he argues that two factors are responsible. The first is that people in the hard sciences need to be better at publicity. The second is that too many people out there suffer from an irrational fear of progress, and simply need to be convinced that the latest gosh-wow technologies will surely benefit them sometime very soon.

Yeah, that was when I started laughing too.

Let’s start by talking about the two supposed breakthroughs Chowdhury talks about. The first is the claim that yet another team of fusion researchers has achieved net energy gain—the point at which the energy coming out of a fusion reaction is more than the energy put into it. This was first achieved in 2014, and a handful of other research teams have managed it in the years since then. Is it a step in the direction of commercial fusion power? Sure, in exactly the same sense that bouncing high on a trampoline is a step in the direction of landing on the moon.

The net energy gain in question, to begin with, is only a gain if you compare the output of the laser beams used to kindle the fusion reaction with the energy released by the reaction itself. It takes far more energy to fire up those lasers than you get out the business end, and so far fusion reactions have not even achieved the energy output they need to power their own lasers. And the other energy inputs needed to build, run, and maintain an experimental fusion reactor? Those aren’t included in the net energy figures either.

Nor, of course, does any of this affect the astonishingly dismal economics of fusion power. The reason that commercial fission, the other kind of nuclear power, is dead in the water these days is not that it doesn’t work—it’s that it’s so expensive that nuclear reactors can’t pay for themselves without gargantuan ongoing government subsidies. Fusion reactors are several orders of magnitude more complex and expensive than fission reactors. This means that even if some future fusion reactor can get positive net energy compared to all its energy inputs, it’s still an expensive stunt, not a source of grid electricity that any country anywhere in the world can afford. Of course Chowdhury doesn’t mention this; nobody pushing fusion hype ever breathes a word about the economics of what promises to be, even if it works someday, the world’s most hopelessly unaffordable power source.

The second breakthrough Chowdhury wants us to get excited about is the claim that a room temperature superconductor has been invented. A superconductor, for those of my readers who went to American public schools and therefore got no scientific education worth mentioning, is a material that conducts electricity with effectively no resistance. Existing superconductors have to be cooled to a few degrees above absolute zero and subjected to various other complex conditions, which limit their usefulness. (Superconductors are heavily used in experimental fusion reactors, for example. Is the energy needed to cool them to working temperatures factored into those net energy figures? Surely you jest.)

So why hasn’t this announcement been met with gladsome cries? Because for decades now the media has been full of exciting new scientific breakthroughs that turned out to be bogus. We’re constantly being told that this or that or the other wonderful technological revolution is about to happen. It’s the follow-through that deserves attention here, because the vast majority of these announcements are pure hype, meant to separate fools from their investment money in the time-honored fashion. As it turns out, the room temperature superconductor seems to be another example of this kind; repeated attempts by other labs to get the same results have failed, and so it’s pretty clear that the research team that made that claim was either mistaken or lying.

This sort of thing is far more common than the cheerleaders of science like to admit. Retraction Watch, the most widely respected organization tracking scientific fakery these days, estimates that more than 100,000 papers should be retracted each year; the actual number in 2022 was less than 5500. Of retracted papers, four-fifths on average are withdrawn due to scientific fraud. You know those claims that scientists can be trusted to police themselves, and will drive fraudulent researchers out of the business? Think again. Retraction Watch lists some researchers who have had more than a hundred papers retracted, and are still happily employed in their laboratories turning out junk science.

Until relatively recently this was treated as an internal problem within the scientific community. The difficulty scientists face is that now it’s a public scandal. A very large number of people outside the sciences are well aware that scientific opinions are for sale to the highest bidder, that a great many scientific studies are fraudulent or simply wrong, and that in a great many cases, scientists simply don’t know what they’re talking about. They know this, in turn, because their noses have been rubbed in it over and over again by public policies promoted by scientists that failed abjectly to live up to the hype.

It’s ironic that Chowdhury should have chosen fusion power as one of his examples, because it’s also one of the biggest offenders here. You can get a belly laugh quite reliably in many parts of today’s America by saying in an earnest voice, “Fusion power is just twenty years in the future!” The reason, of course, is that experts have been saying these words in exactly that tone since the 1950s. Most people realize at this point that it’s never going to happen; most people figured out a long time ago that the fusion researchers who say this are simply angling for another round of government money to flush down their high-tech ratholes.

The fact that scientists, politicians, and the media still pretend that commercial fusion power is possible is thus an important factor in the collapse of public confidence in expert opinion of all kinds. The narrative that scientists, politicians, and the media are pushing—“fusion researchers are closing in rapidly on a wonderful new power source for everyone”—has drifted much too far away from the narrative that the facts are telling—“fusion researchers are spinning their wheels uselessly, but they don’t want to admit it since their income depends on claiming otherwise”—and more and more people are coming to believe the second narrative.

It’s far from the only offender along these lines. At least as much credit has to be given to the scientific rhetoric around climate change. Before we go on, I want to point out that yes, the global climate is changing; yes, there are serious problems caused by the current pace and direction of climate change; and yes, greenhouse gases produced by human activity play a role in the shift in climate we’re experiencing. Those three points are important, and in an upcoming post I’ll be discussing them in considerably more detail, but they don’t begin to justify the shrill claims that have been made by climate scientists in recent decades.

The parade of failed doomsday predictions by climate scientists has become so embarrassing that you can find entire chronologies online listing inaccurate claims made by experts, and comparing them to what actually happened. Somehow, despite those claims splashed around by Al Gore et al., the Arctic Ocean is not yet ice-free in summer—that was supposed to happen years ago, according to the hype—and polar bear populations are rebounding as the bears do what Darwin predicted and adapt to changing environments. Again, this does not mean that global climate change isn’t happening. It means that the experts know a lot less about climate change (not to mention polar bear ecology) than they think they do. What that means, in turn, is that a growing number of people are responding to the latest dire pronouncements of climate activists by rolling their eyes and walking away.

Hasan Chowdhury also has his equivalents in this field. I’m thinking here especially of a recent article by Rebecca Solnit titled “We can’t afford to be climate doomers,” which you can read here. She insists that it’s wrong for people to assume that nothing can be done about climate change—why, if we all clap our hands in unison and believe, surely Tinkerbell can be saved! It’s interesting to compare Solnit’s earnestness with the equally earnest claim by Greta Thunberg in 2018 that if we didn’t give up all fossil fuels within five years, humanity would be doomed to certain extinction. If the scientists Thunberg cited were right, it’s already too late; if they were wrong, why should we believe the rest of what they’re claiming?

Solnit is incensed that “the comfortable in the global north”—that is to say, the privileged classes to which she herself belongs—are increasingly discouraged about climate change. She insists that all we have to do is embrace the same remedies she and her fellow activists have been pushing all along: political action, allegedly green technologies, and the demonization of fossil fuel companies. The difficulty, of course, is that those supposed remedies have not just failed to achieve their goals, they’ve failed to have any effect on climate at all.

After all, despite climate treaties, green technologies, activists throwing public temper tantrums about climate, and the rest of it, fossil fuel use and the CO2 concentration in the atmosphere both continue to climb steadily. All those wind turbines and solar PV farms haven’t even slowed the global increase in fossil fuel consumption, much less replaced any noticeable amount of fossil fuels with green energy—in point of fact, world consumption of coal, the dirtiest fuel of all, hit an all-time record last year, up 3.3% from the year before. The narrative Solnit is pushing amounts to “climate protesters are heroes saving the earth from evil fossil fuel companies,” but the narrative that the facts are telling is “climate protesters are a pampered subculture engaging in meaningless virtue signaling while ignoring their own carbon-laced lifestyles.” Once again, it’s the latter narrative that’s become more convincing to people these days.

What exactly have the last three decades of climate protest accomplished, after all? That’s a question you’re not supposed to ask. Of course, mutatis mutandis, that’s the question you’re not supposed to ask about fusion—what have all those billions of dollars of investment in fusion reactors accomplished so far?—or about a long, long list of supposed breakthroughs and dangers the media is still pretending to take seriously. No matter how many times the same hype has been disproven by events, no matter how many supposed experts have tripped over their own predictions and bloodied their noses on the hard pavement of reality, the rest of us are supposed to place blind faith in whatever they happen to say this time around.

There, in turn, is where it’s possible to glimpse the chasm opening beneath the feet of today’s corporate-managerial aristocracy.

Every society depends for social cohesion on the widespread acceptance of a shared narrative about authority. In the European Middle Ages, the narrative held that kings were anointed by God to do the work of leading God’s people, and that divine sanction cascaded down the feudal hierarchy through dukes and barons and knights and peasants all the way to the swineherd leading his pigs. Everyone knew that plenty of kings, and for that matter plenty of swineherds, didn’t live up to the image the narrative assigned them. As long as the narrative remained in place, even the political radicals of the time thought in terms of getting each person to fill their assigned roles, rather than tearing down the feudal structure itself.

The medieval narrative was durable precisely because it wasn’t vulnerable to objective disproof. If the king was a brute or a nitwit, as of course he was tolerably often, that just showed that God was irate and had sent the people a bad ruler as punishment for their sins. The early Protestant-capitalist narrative that replaced it was equally immune to disproof. God (or his faux-secular equivalent, the almighty market) had assigned the rich their riches and the poor their poverty as a sign that the former were pleasing in his sight and the latter were not, and the remarkably arbitrary nature of divine favor was hardwired into Protestant ideology from the early days of the Reformation onward.

But the Protestant-capitalist narrative gave way in the wake of the Great Depression to a new narrative of expertise. According to that new narrative, bureaucratic and corporate meritocracies had received the secular equivalent of divine favor because they were the smart kids in the room. Their university degrees and their successful ascent of organizational hierarchies proved that they were better suited to run the world than anyone else, and they were expected to demonstrate that in practice by pursuing policies that worked.

At first, that wasn’t much of a problem, because the kleptocratic investment class that ran the country before the Depression had made such a mess of things that almost anything would have been an improvement. Later on it became more difficult, when real world problems—cough, cough, Vietnam, the War on Poverty, etc.—turned out to be much more recalcitrant than anyone in the managerial class thought. There was a trap hidden within their rhetoric, however, and it turned out to be a trap from which they could not escape.

Central to the core narrative of the entire industrial world during the managerial era was the insistence that sometime soon everything would change. The world we all knew would be replaced by something else—by a shiny Utopian Tomorrowland, if we all gave the experts everything they wanted, and by a smoking postapocalyptic wasteland if we didn’t. That was the message that scientists, politicians, and the media rehashed endlessly: tomorrow may be wonderful or it may be terrible, but it will not be the same as today.

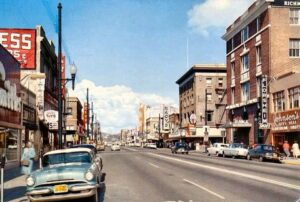

The fact of the matter is that both the promise and the threat turned out to be bogus. As Peter Thiel famously said, we were promised flying cars, and what we got instead was 140 characters and an easy way to circulate cat pictures around the world. Chowdhury himself, in the article cited above, quoted a venture capitalist complaining that no matter how wonderful and exciting and cutting-edge the imaginary world of computer imagery looks, as soon as you return to everyday life things change utterly: “The minute you get into a car, the minute you plug something into a wall, the minute you eat food, you’re still living in the 1950s.”

Except, of course, you’re not—not unless you’re a wealthy venture capitalist, or for that matter a comfortable media flack, and in either case you can afford to ignore the explosive crapification of modern life. In the 1950s an ordinary unskilled laborer could count on earning enough money to stay fed, clothed, housed, and supplied with the other necessities of life. In the 2020s even skilled workers have to struggle to do these things. Compared to that unchanging reality, all those promises of a shiny new future about to dawn any day now look like vicious jokes. Even the thought of a sudden apocalyptic end to the system has begun to lose its appeal—and yes, it has considerable appeal to those who have been assigned the short end of the stick by the current system. The apocalypse has pulled so many no-shows at this point that the disaffected are no longer counting on it to free them from the dead weight of an unbearable situation.

What we see around us is a society caught in the throes of futurus interruptus, denied both the orgasmic release of the Tomorrowland future and the even juicier equivalent of its apocalyptic twin, waiting in increasing frustration for a fulfillment that’s endlessly promised but never arrives. That’s the time bomb ticking away at the heart of the system. Chowdhury, Solnit, and their many equivalents in today’s media are right to be terrified at the increasingly widespread refusal to put any more faith in the same tripe that’s been shoveled forth by their equivalents since the managerial aristocracy seized power not quite a century ago. Once the central narrative breaks down, after all, the end of the existing order of society is a foregone conclusion.

That end need not involve vast amounts of bloodshed. Wars of independence tend to be hard-fought, but domestic revolutions very often involve only token violence. What happens instead—in France in 1789, in Russia in 1991, and in many other cases—is that a system that has been hollowed out by a string of cascading failures runs into one more crisis than it can tolerate, and implodes under the weight of its own absurdities. We are much closer to such scenes in North America and Western Europe right now than I think most people realize. Every belly laugh called forth by the drivel that Chowdhury and Solnit expect us to believe brings us closer still.

* * * * *

A glance at the calendar reminded me this morning that August has five Wednesdays, and it’s a long-established tradition on this blog that the readers get to vote on the topic for my post for the fifth Wednesday of any month that has one. The ball is in your court, dear readers. What do you want to hear about?

May or may not be OT:

http://historyunfolding.blogspot.com/

The domestic revolutions themselves may have low death tolls, but revolutions that are followed by civil war, insurgency or foreign wars tend to have the death toll go through the roof, potentially in a big way. I’ve heard estimates along the lines of 9 million people for the Russian Civil War. And the civil war surrounding the Mexican Revolution killed a lot of people when harvests got destroyed, and in France the revolution was followed by a civil war in one area of the country and foreign wars that eventually went (literally) Napoleonic. And then there’s the Chinese Civil War/Chinese Revolution, which was very heavily tangled up with WW2, so it is hard to tell which of the 15? 20? million people who died were killed by what.

When having a revolution, you really want to avoid letting a civil war follow it. That is where the horrors lie…

Right along with the collapse in confidence in science, for the reasons that you have mentioned, is the collapse in the actual scientific and technical knowledge of the population.

Our K-12 schools and higher education have been hollowed out, with fewer and fewer students pursuing degrees in technical fields (coding is not science). Many people who missed out on a useful education in science and math now try to substitute learning from podcasts and such. I have several acquaintances who would not know the First Law of Thermodynamics from the Magna Carta but think themselves experts on travel to Mars, the build-out of the grid with renewable energy and the bright future of solid state batteries. Combining the decline in confidence in actual science (what little of it there now is) with a growing confidence in junk science (or junkier) is a combustible mix.

All the friends I went to engineering school with (the old days) are as skeptical as I am of the claims of fusion, full scale renewable energy etc. because none of these things make technical sense when subject to even a simple investigation. But the new “podcast scientists” are gung ho and will face a much more devastating wake up call when the curtain is finally pulled back on the wizard.

I saw the article on room temperature superconductors and didn’t bother to read it. There was a time when I would have read it with fascinated enthusiasm. I figured that if it turned out to be a game changer, I’d be hearing about it again in the future, and if I didn’t, then it was just another flop. And most things of that type don’t seem to live up to their billing these days, especially the most ballyhooed ones.

Dear JMG, let’s give this decadent-degenerate class the answer it deserves: Collect them all like a ‘Witch Hunt’ and throw them in jail! Take back everything that they have taken from us and tried to take from us and give them nothing! They always despised us hahaha now it’s our turn!

If you want belly laughs, I was going to send you this one I found a couple days ago, but I didn’t want to add a late, pointless comment to an old post. https://quillette.com/2023/08/06/ais-will-be-our-mind-children/ In amid his strange argument that what happens to our real children doesn’t matter since AI are our true descendants, he takes the time to complain about our lack of flying cars, free nuclear energy, and infinite wealth – it’s all because technological reactionaries and luddites fear change, you see! And they impose unnecessary and pointless regulations that stifle new technologies. Never mentioned is why, as you say, the filthy masses might have come to distrust change, or for that matter whether or not the populace in a democracy is allowed to vote themselves any regulations they may dang well feel like into existence.

For another belly laugh: Last summer, when I was in a bookshop, I saw a book about some politically correct subject (I no longer remember what it was), and as I looked at the authors, I saw that they were none other than Greta Thunberg and the Dalai Lama. A really weird pairing, I thought: a climate activist and a Tibetan lama.

JMG,

Excellent post as usual. After the post on Stormtrooper Syndrome, I was expecting a post on one of the other syndromes so rampant in our society today. I guess maybe you did – perhaps this post could be subtitled Wile E. Coyote Syndrome, since our super genius scientists keep chasing the Roadrunner of sustainable and affordable cold fusion or room temp superconductors, but can never quite catch him and instead run off a cliff and/or are smashed by a boulder or anvil (i.e., reality). In spite of all this, ACME (US Govt) keeps sending the funding and supplies.

For fifth Wednesday, my vote is for the topic of karma.

Thank you for the reality check today. I vote for your view on Jung and the occult for the last Wednesday in August blog.

I can’t help notice that the ChatGPT example he cites is one that seems to me to promise more harm than good. Goodbye plenty of people’s livelihoods. Hello more academic cheating, more easily. Seriously, does anyone actually do their homework themselves these days? And if they don’t, are they remotely competent at the things their diplomas say they should be?

Believe it or not, there’s been a running argument in the fanfiction community by the people who run the websites, over whether AI written stuff should be allowed, what precautions if any should be taken to avoid people’s work being scraped by AI etc. It seems to have been won by the AI positive group, so I’m left wondering if some of the stuff I’m reading was written by a bot. I’m also wondering why those of us who actually write the fanfiction were never asked our opinion. Probably because we didn’t want AI written stuff and they didn’t want to know since they weren’t sure how to prevent AI written stuff from being posted because they can’t tell it apart.

A lot of the fun of fanfic is interacting with your favorite writers and with your readers. That needs real people on both ends to be meaningful.

The academic types really are lining up to get the ostracon treatment, aren’t they? Perhaps the mob will use broken smartphone screens instead of seashells on our modern, doubtless purple-haired Hypatia.

For myself I am at a loss. The practice of science needs good people, but is absolutely unable to select for them. You can’t even advocate selecting for them. “Best we can do is not hire white men.” Being a white man with (I think!) a sense of integrity, I admit a bit of sourness over what has happened to my once-beloved vocation. Gave up with a master’s degree in a hard science, and spent the last decade or so working in a science museum. (Because even back then “pass over white men” was very much on order for grant approvals and hiring committees, if only implicitly; now of course they proudly say it out loud.) Of course the whole place is to me a Saturnalia in the name of Progress — a revel drunk on its ideology, totally backwards to my own values. I’m not sure who has changed more: me, or the zeitgeist-following NPCs I work with. I’ve burnt out on that so badly I started fantasizing about doing a demonstration on the nature of gravity with a rope around my neck in a high place in front of the building — brr! Time to go. I haven’t found other work, but they’re getting my notice regardless. Better a notice than a noose.

A nibble on the old networking pointed me towards the federal bureaucracy. The job ad had several times as much verbiage about “equity” and what type of human they were looking for than what they actually wanted that human to do. So I can’t imagine why they’d pass it on to a white man, unless they have poor reading comprehension or just don’t realize these nitwits are actually serious. I do wonder if these people realize the deep well of resentment they’re filling with all this? I’m quite certain Canada will end like Yugoslavia because of it. I only regret that the ones sowing the deepest seeds of discord will almost certainly flee the country and escape the whirlwind.

Does anyone know if there are any Red States hard up enough for teachers they might be sponsoring green cards? I do not have the certification — but I am a very, very good educator. (Given the state of our universities, it’s probably because I lack the B.Ed that I can teach well, not in spite of it.) I don’t say that to toot my horn, simply that I’ve had to recognize that it’s really the only thing I’m good at. If I could find a way, I’d take that talent somewhere that less obviously despises me. As bad as those “Rich Men North of Richmond” are, I don’t think they hold a candle to the oligarchs in Ottawa.

I’d like to add that the population of the Untied States of America (TM) has gone from 151 million in 1950 to 340 million in 2023. This simple fact is utterly ignored by virtually everyone and it goes a long way toward explaining many of the predicaments this continent now faces, from the ecological devastation of our forests and farmlands to the cost of housing, to the price of energy and even perhaps the precipitous decline in fertility. All of these were made worse by greed and stupidity at the highest policy making levels. (Does anyone else trace the tipping point of our society to the 1980 presidential election of Ronnie Raygun?)

5th Wednesday vote: Past lives/reincarnation – especially in regard to psychosis

“The minute you get into a car, … you’re still living in the 1950s.”

My daughter’s Prius refutes that assertion. :-). That said it does have two pedals on the floor and a steering wheel. And a several hundred page instruction manual and a second manual on how to run the “infotainment” system.

If you look under the hood you will know true despair. But it is getting over 50 miles to the gallon. The complexity cost of that level of efficiency is mind boggling.

The superconductor turned out to be an odd ferromagnetic material. Much hype over nearly nothing, though something may still be learned, since that material is not supposed to be magnetic, so it’s worth looking into.

As for the real superconductors at the fusion test facility, they are having problems with sudden loss of superconductivity, or quenching:

“If a quench is confirmed, the switches on large resistors connecting coil and resistors are thrown open and the magnetic energy of the coil is rapidly dissipated, avoiding any damage to the coils. For the toroidal field coils that have the largest amount of stored energy, 41 GJ, achieving total discharge can take about one a half minutes.”

https://www.iter.org/newsline/278/single

41 GigaJoules is 9.8 tons of TNT (the internet has unit converters for everything) so if the quench control system fails, well, it won’t be pretty.
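For anyone who wants to check that figure without a unit converter, here is a minimal sketch of the arithmetic. It assumes the conventional definition of one ton of TNT as 4.184 gigajoules; the function name is just an illustrative choice, not anything from the ITER article.

```python
# Rough check of the TNT-equivalent figure quoted above.
# Assumption: one ton of TNT is defined as 4.184 GJ (the conventional value).
GJ_PER_TON_TNT = 4.184


def gj_to_tons_tnt(energy_gj: float) -> float:
    """Convert an energy in gigajoules to tons of TNT equivalent."""
    return energy_gj / GJ_PER_TON_TNT


# 41 GJ is the stored energy quoted for the toroidal field coils.
stored_energy_gj = 41.0
tons = gj_to_tons_tnt(stored_energy_gj)
print(f"{stored_energy_gj} GJ is about {tons:.1f} tons of TNT")
```

Under that assumption the result rounds to 9.8 tons, which matches the number above.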

Having fought through the academic system and gotten a PhD and seen what it was like, and then choosing to go back to industry, I can say that publish or perish is still the rule. As the supply of “new physics” has dried up, the need to publish something, along with the desperate scrabbling for funding with ever less applicability to the practical world, has led right to the well reported “replication crisis”. There is only one Large Hadron Collider and one James Webb Space Telescope. Their time is fully booked, so what is a newly minted PhD to do?

In China the government is sending them off to grow rice. This is not going down well with the students.

Probably the best thing we can do as humanity is to burn all the fossil fuel we have left, but cautiously and at a decreasing pace. Save it for a few generations. All green “solutions” only drink more carbon. Prepare for more rain, drought and sea level rise or whatever. Build a society less dependent on technology where we sing in choirs, make love and unite with wild nature (what is left).

What is your take on AI? My dream is that it gets so intelligent it destroys itself. A kind of technotic seppuku. Internet dies and people wake up. But that is just a dream. Probably something much more evil will happen. Do you think we humans in modern societies already are part of AI? How has the digitalization of our lives changed our consciousnesses?

Thanks for your excellent essays. Your magic stuff I can’t follow unfortunately but I really enjoy your wisdom in other issues. Great to see you in Unherd!

God bless and go with Gaia!

People across the Western world are grasping for solutions for why the world has become so sh*t and have found a diverse range of ideologies, solutions and people to blame. Resource and energy depletion, diminishing returns on research and innovation and even environmental damage are massively downplayed. It’ll be interesting to see what happens when a system has become so obviously inept and is hated by so many, but there are such diverse views of how to deal with it and what to replace it with.

I suspect that much of the mass immigration into the west engineered by the Western elites and the associated promotion of critical theory (hardly a better ideology to divide people) has been done specifically to create divided populations of plebs fighting each other and blaming each other for their respective hardships rather than one united population capable of seriously threatening the system (look at how the establishment started pushing critical theory in its various ghastly iterations after enough people protested in movements like Occupy following the 2008 debacle). If you get people fighting on race lines or over nonsense theories about gender then the establishment can take the side of one group that could challenge them and pit them against another.

Very clever, but very very evil.

Lithium batteries are another example of “Long on hype, short on delivery” press releases. I spent a while rather deep in the “small lithium pack” space (rebuilding failed and worn out ebike batteries), and people would regularly send me one press release or another about this or that “order of magnitude” improvement in some aspect of the batteries, asking what I thought.

I gave the same response I always did: “If even half of these turned out to be true, I’d be able to run an ocean liner across the ocean on the energy stored in a block the size of my cell phone that I bought with spare change from my couch.”

Either the fancy new hyped feature was so isolated that you couldn’t build a battery with it, or the cycle life was beyond awful (if they show charts out to 20 cycles in the paper, it’s useless in any practical application), or it was impossible to build at a useful cost, or… pick your way they never quite managed to revolutionize batteries. Batteries keep improving, incrementally, but almost none of the hyped “revolutions” have come about.

I also ran into a lot of “Well, sure, we’re hitting the bounds of lithium, but clearly there’s some other battery technology we just haven’t discovered yet!” thinking – because we want something better, and claim we need it, why, the universe is just obligated to deliver it to our doorstep!

Physics is a harsh mistress.

As far as the latest fusion-related stuff goes, the Do the Math blog has pretty thoroughly debunked it here: https://dothemath.ucsd.edu/2023/08/fusion-foolery/

TL;DR: The electricity generated would probably keep the lights on in the building where it was done for less than a minute.

At some point while reading this the phrase “scientific folklore” came to mind. That probably does an injustice to folklore.

Ah yes, the alleged superconductors. After the news came out, some already began to have feverish dreams about the sheer infinite possibilities that this would bring. It would revolutionize mobile technology – a market of so and so many billions. It would revolutionize industrial production – a market of many more trillions. All, of course, powered by the coming green energy transition.

One could feel how much those who believe in eternal progress longed for it to be true.

Technological miracles – that is, world-changing breakthroughs – have become rare in recent years. And very slowly, skepticism about the myth of eternal progress seems to be growing. After all, many of the wonderful promises turned out to be false.

What a pity that technological progress is still seen by so many as the only solution to our many social and other problems.

Hi JMG & commentariat, I would like to see a commentary on the Zen Ox herding pictures as it relates to spiritual development.

Someone posted on ZeroHedge:

https://en.m.wikipedia.org/wiki/Turbo_encabulator

The Turbo Encabulator:

“The original machine had a base-plate of prefabulated aluminite, surmounted by a malleable logarithmic casing in such a way that the two main spurving bearings were in a direct line with the pentametric fan. The latter consisted simply of six hydrocoptic marzlevanes, so fitted to the ambifacient lunar waneshaft that side fumbling was effectively prevented. The main winding was of the normal lotus-o-delta type placed in panendermic semi-bovoid slots in the stator, every seventh conductor being connected by a non-reversible tremie pipe to the differential girdlespring on the “up” end of the grammeters.

— John Hellins Quick, “The turbo-encabulator in industry”, Students’ Quarterly Journal, Vol. 15, Iss. 58, p. 22 (December 1944)”

“The minute you get into your car, the minute you plug something into your wall, the minute you eat food, you’re still living in the 1950s.”…Damn right, and it was great! Born in ’46, the ’50s were my formative years, and for middle class white Americans, these were good times indeed. Little Richard and Jerry Lee Lewis shocking the cultural establishment, Hula Hoops, the emergence of pizza pie, outlandishly huge automobiles with weird futuristic tail fins (presaging the space age), making out in the balcony at the movies, Bugs Bunny in drag, TV, ‘progressive’ Jazz, Mickey Mantle, Nikita Khrushchev…the list goes on. It was all good, and all a sort of circusesque cartoon – a decade that parodied itself…Of course, it marked the birth of the Interstate Highway system – not so good.

Here is a musical offering to the commentariat, the track Junk Science by Deep Dish from ye olde 1998.

https://www.youtube.com/watch?v=6KLNcmwxDnM

“What kind of music did you used to listen to grandpa?”

“There was this stuff called electronic music, they made with machines…”

I recall that after the last grand conjunction of Saturn and Jupiter, you cast an astrological chart which predicted a shift away from democratic governance, as well as a rejuvenation of the arts. It seems that the first part is rapidly manifesting itself, but what evidence, if any, do you see of a rejuvenation in art, music, movies, etc.?

Here are all of the requests for prayer that have recently appeared across the Ecosophia Community. (A printable version of the entire prayer list may be downloaded here.) Please feel free to add any or all of the requests to your own prayers.

If I missed anybody, or if you would like to add a prayer request for yourself or anyone who has given you consent (or for whom a relevant person holds power of consent) to the list, please feel free to leave a comment below or at the prayer list post linked at the very beginning of this notice.

* * *

This week I would like to bring special attention to the following prayer requests.

Lunar Apprentice, that he find the strength and the capability to successfully fulfill his self-appointed duties both to his patients and to his family, that the insurers contracting with his patients be favourably disposed towards the service he faithfully provides and pay as they are obliged, and that the flow of new patients increase sufficiently to support his medical practice.

Nebulous Realms has reason to think they may be in particular physical or spiritual danger for the next couple of weeks (posted 8/7); they request prayers for their personal safety.

Steve T’s brother Matt is currently in the hospital after a sudden violent seizure, and his daughter is having extreme panic attacks; they were both in a terrible car accident last fall. Steve asks for prayers for Matt’s recovery of health; for the emotional and psychological well-being of the rest of the family, including his wife Megan, his daughter Diana, and his young son Jake; and for the lifting of any spiritual harm afflicting the family.

Freddy, Ganeshling’s neighbor’s 10 year old son, hasn’t spoken since a traumatic hospital stay a few years ago; for Freddy to start speaking again and to help him develop into a functional adult.

Tamanous’s friend’s brother David got in a terrible motorcycle accident and has been diagnosed as a quadriplegic given the resultant spinal damage; for healing and the positive outcomes of upcoming surgeries and rehabilitation, specifically towards him being able to walk and live a normal life once more.

Lp9’s hometown, East Palestine, Ohio, for the safety and welfare of their people, animals and all living beings in and around East Palestine, and to improve the natural environment there to the benefit of all. The reasonable possibility exists that this is an environmental disaster on par with the worst America has ever seen. (Lp9 gives updates here and also here.)

* * *

Guidelines for how long prayer requests stay on the list, how to word requests, how to be added to the weekly email list, how to improve the chances of your prayer being answered, and several other common questions and issues, are now to be found at the Ecosophia Prayer List FAQ.

If there are any among you who might wish to join me in a bit of astrological timing, I pray each week for the health of all those with health problems on the list on the astrological hour of the Sun on Sundays, bearing in mind the Sun’s rulerships of heart, brain, and vital energies. If this appeals to you, I invite you to join me.

I started laughing at the end of the first paragraph. You can’t not laugh at the title of the article.

Yes, they’ve been promising breakthroughs in fusion for as far back as my memory goes (I’ll be turning 69 next month) and it’s becoming painfully obvious that’s all it is; just promises, like the moon colonies, flying cars, magic bullet cures for cancer, etc, etc.

Give us some worthwhile results and maybe we’ll stop yawning.

Almost all the books I bought during the Seventies, Eighties and Nineties and early 00’s such as the State of the World and various Lester Brown efforts, are gone now. After reading through the earlier books and comparing them to the later ones, it finally dawned on my dim flickering 10-Watt mentality that not only had nothing really changed, nothing probably would be done about the problems they kept fulminating about. Sure did free up a lot of space. The sole survivor is Limits to Growth.

“Science” jumped the shark 20 years ago.

We’ve transitioned from “peak expert” to “post expert”.

It does beg an interesting question though.

What constitutes an expert?

In the olden days, it used to be when the results objectively measured and repeatedly validated the falsifiable hypothesis.

I recently read a biography of Enrico Fermi. The size of the industrial facilities supporting the Manhattan Project was astounding. And yet all that effort produced three (three!) fission devices. I guess it set a benchmark for what was to come…

I’m certainly no believer in the “smoking postapocalyptic wasteland” vision of the future, but I must admit, I thought the slow descent into a new dark age would be just a little bit faster than this. The last year has felt so stagnant in so many ways that even the long descent seems like a no-show. It sometimes almost feels like this socioeconomic purgatory will last forever… or at least for the rest of my life. I keep waiting for the dam to burst – and I’m partly glad it hasn’t yet – but the antici…pation is killing me! It’s like when you’re really sick, and you just wanna puke your guts out and get it over with, but the pain and sickness just linger on and on for days. I definitely laugh at the absurdity, though, so maybe that will speed things up.

As for a Fifth Wednesday topic, I enjoyed your recent conversation on polytheism, and I would love another post on the subject of gods and goddesses.

Hi JMG,

It is interesting how after all these years even serious thinkers are still largely stuck in the false binary of future apocalypse or future progressive utopia. I recently came across a citation to Arthur Herman’s _The Idea of Decline in Western History_ (2007) in which he argues that predictions of decline are a kind of long-term, contagious intellectual pathology. There are chapters calling out everyone in turn from Rousseau to Spengler to Toynbee for this particular mental illness.

Maybe I have been doing too much thinking in spiritual terms, but it seems as if the PMC wants to bully everyone else to place their faith in credentialed experts–and I mean faith in a technical religious sense.

On the climate issue, I am not hearing activists call for more protests, I am hearing them push hard for Carbon Taxation. If only we can enact a grand reform to tax fossil fuels then we can usher in the shiny future. I think they know that such a scheme is impossible because it will only work if there is a unified global bureaucracy. Even if that were possible, there is not a shred of evidence that any government on earth is capable of efficiently allocating that sort of capital towards ecological sustainability. But it is much more comfortable to rant about the unenlightened who haven’t seen the redeeming light of Carbon Taxation than to actually make sacrifices and changes in their personal lifestyles…

Coincidentally, physicist Tom Murphy at Do The Math just published an article about “fusion” that shows in detail the failure of that latest hype about creating more output than input; further concluding that that ‘research’ is actually directed toward ‘better’ nuclear bombs rather than doing anything useful.

For the fifth Wednesday post, I would be interested to hear your thoughts on what comes after industrialism–Scarcity Industrialism–and what you think are the most representative examples that have emerged since you wrote the Ecotechnic Future.

JMG, I think you are missing that Fusion is viable…

It is coming with the UFOs… ha ha ha

I think even this last round of “UFO” revelations has also fallen flat. People are tuning out all sorts of things coming from our “betters” (at least that is my perception).

thx for the essay.

When I read Mr Greer’s remarks about nuclear fusion, I find myself thinking of Clarke’s Law:

“When a distinguished but elderly scientist states that something is possible, he is almost certainly right. When he states that something is impossible, he is very probably wrong”.

And wondering whether it might conceivably apply, mutatis mutandis, to a distinguished and not so elderly Archdruid.

Have you given any thought to writing about what parents and grandparents of female pre-teens might be suggesting as viable careers, lifestyles, and plans for the next 55-75 years of their pre-teen’s lives? It’d be grand if you and your audience can hold forth on that topic.

Fifth Wednesday candidate: the issue I mentioned last week where the managerial class has an incentive to never solve any of the problems they claim to address, as that would put them out of a job.

As I see that Hasan Chowdhury is described as a reporter for Business Insider, I wonder how qualified he is to evaluate scientific breakthroughs. In my limited experience, the number of really expert science reporters is quite small compared to the number being published. The names of Gary Taubes and Hannah Holmes leap to mind; and I once was on friendly terms with Tony Durham. Er…

In my experience, most technologically literate people accept that fusion power is still far in the future, but still seem to think it will become prevalent in that far future, even though they won’t live to see it. That also conveniently sidesteps the issue of the high cost, not because the reactors could ever be scaled down or built with cheaper materials, but because “post-scarcity” that won’t matter.

But if you posit post-scarcity as a prerequisite, why would fusion power be needed at all? Spaceships, presumably, but that goes unstated. So why not wait for post-scarcity to commit presently scarce resources to developing it? “Because we need it to get to post-scarcity.” Contradiction unacknowledged and as far as I can tell, un-perceived. Anyhow, that appears to be one way the research maintains support or at least passive acceptance from many people who are equipped to know better.

All the superconducting blends are non-malleable and non-ductile. More suited to ceramic applications than electrical ones.

John–

For the fifth Wed post, I’d be interested in your view of what group(s) you see as potential successors to the current PMC managerial elite and how you see the transition possibly playing out.

But I’m certain “Dr” Peter Hotez will create a vaxx for parasites with the next $100million dollars …even if the unwashed natives are happy and fine to use practically free ivermectin.

https://williamhunterduncan.substack.com/p/forgiveness

There’s a Japanese manga series called Zom 100: Bucket List of the Dead which begins with a zombie apocalypse. The main character, an office worker toiling under the usual but awful Japanese work conditions, responds by vigorously celebrating that he no longer has to go to work and, from that point on in the story, is super cheerful despite the situation. A lot of Americans and Japanese are mentally there.

For a fifth Wednesday topic, how about a dime museum tour of future warfare & military strategy. The topic seems apt after the post from two weeks ago.

I would vote for a continuance of the current topic concerning exactly where we are at vs “the narrative”.

I would also like to ask if I’m alone in the feeling that I have no real connection to this country or its culture or institutions? My people have been here 300 plus years and it seems irrelevant. I feel like a stranger here. Is it odd that I couldn’t care less about what happens to it all? Or is this the over-arching point to essays like this? Are folks like me the natural conclusion to this mess? I would very much appreciate any insights you have, Mr. Greer…thank you.

So if our current meritocratic overlords go the way of the Spanish Habsburgs, what comes next?

The military generals, CIA spies, mafia, the Illuminati, or dare I say the Discordians! Their goddess has just made some announcements in the media lately 🙂

Or can I put in a vote for a new system of merlintocracy. Where a select group of established wise druids under the guidance of spiritual masters elects the current Arthur.

Best regards

Marko

If you want to see a field just as filled with hopium and false promises as fusion, just look into the long term potential of commercial air travel. I thought I might find a few realistic assessments of when air travel for the masses would have to be wound down in favor of train travel or sailing ships. But no, the future of flying to Disneyworld is bright. No one wants to mention fossil fuels running out; the code word is decarbonization. But the answer is the same: Hydrogen Fueled Jetliners.

Just a few small bugs to work out. If Hydrogen is compressed to 10,000 PSI and cooled to near absolute zero, then it has an energy density about a third that of Jet Fuel. In addition, because of the high tech spherical tanks, this fuel (three times the volume needed) will not be able to be stored in the wings but will compete for passenger and cargo space. Then we have to upgrade our airports to handle this high pressure, low temp fuel safely and we are on our way.

I remember back in the late 70’s we had actual conversations about what we could do for long distance transportation when fossil fuels ran out.

Hi JMG and commentariat,

here’s a link to a recent article by Kurt Cobb about drawbacks of fusion (assuming it will ever be possible) that I wasn’t aware of, stemming from the fact that you can’t use just plain old hydrogen for fusion on planet earth since the environment here is so radically different than it is in the sun; so deuterium and tritium are used instead with all kinds of implications:

http://resourceinsights.blogspot.com/2023/06/fusion-its-messier-and-harder-than-you.html

In it he cites Daniel Jassby, a former research physicist at the Princeton Plasma Physics Lab:

“[T]hese neutron streams lead directly to four regrettable problems with nuclear energy [both fission and fusion]: radiation damage to structures; radioactive waste; the need for biological shielding; and the potential for the production of weapons-grade plutonium 239—thus adding to the threat of nuclear weapons proliferation, not lessening it, as fusion proponents would have it.

[I]f fusion reactors are indeed feasible—as assumed here—they would share some of the other serious problems that plague fission reactors, including tritium release, daunting coolant demands, and high operating costs. There will also be additional drawbacks that are unique to fusion devices: the use of a fuel (tritium) that is not found in nature and must be replenished by the reactor itself; and unavoidable on-site power drains that drastically reduce the electric power available for sale.”

As for climate protests, you can make that nearly four decades, and looking at the data no sane person could seriously argue that they have achieved anything (apart from indirectly making some people rich).

greetings

Frank

JMG,

Some political/cultural pundits I’ve been reading lately believe that the elites are confident that they can easily squelch any revolutionary uprising, given their over-the-top “lawfare” persecution of Trump. It’s as if they’re deliberately goading the plebes into some kind of violent reaction so they can justify an oppressive clamp-down.

This seems to me to be another elite fever dream. For one, I keep thinking about all those American young men, the natural born warrior-types from venerable military families who are *not* enlisting due to the Wokening of our military branches. Push comes to shove, I doubt they’d be sitting on their hands. There are other factors too, e.g., food delivery truckers. What do you think?

Thanks.

Another excellent article. It honestly never occurred to me before now that domestic revolutions tend to be limited in their violence, but it certainly matches my memories of the various coups of the last decade, such as Zimbabwe. It makes sense, though: once a government is in terminal failure, it simply isn’t much worth fighting over to either side.

For the fifth Wednesday post, one thought that’s occurred to me from time to time is that future governments will need to incorporate the lessons learned by the current failures of the West. What might a future (post-)American constitution look like that takes those lessons seriously — not some utopian scheme, just a set of amendments with the character of “this will not happen again”? What lessons do you think will be most important and how would you address them?

If that’s perhaps too broad a topic for a single post, perhaps a narrower but related topic: the future of freedom of religion. Once the second religiosity kicks into high gear, it seems likely to me that they’re going to jettison the current, degraded understanding of the concept (which amounts to letting the rubes believe whatever silly things they want as long as it doesn’t inconvenience the ruling elite). So what comes next, and what can we do now to help make a better future for those of us perpetually on the fringes?

Hello JMG! I have a suggestion for the Fifth Wednesday post: I would love to see your post ‘What to Do in the Age of Memory’.

Dear JMG,

A proposal for a blogpost: the magic and sophistry of factions supporting both climate change denial and skepticism.

I ask for this because I am frequently struck by how the layperson is asked to take it on faith that black box climate models are to be believed or disbelieved. And how into this uncertainty and opacity march the vested interests, wielding their bag of magical tricks of persuasion. I noted with curiosity the sigil-like logo used by Extinction Rebellion, using what appears to be a runic symbol at its centre. And on the other side, the term climate change itself seems to have been formulated to mask its man-made origins, what I believe might be called a spell of meaning.

Yours kindly,

Boy

Regarding climate change: among some people, climate change has achieved the odd status of being the only reason anyone might ever consume less than they possibly could. I’ve found that when I try to advocate for LESS, however carefully I try to explain the advantages (such as personal economy, mental and physical health benefits, and reduced dependence on systems one doesn’t control) based on my own experiences, some will construe my “real” agenda as either deludedly “thinking I’m saving the world,” or its binary counterpart, cynically engaging in “pointless virtue-signaling,” both regarding climate change.

Imagine if some people really want LESS and have looked for reasons to justify it (e.g. to their family and friends). “To stop climate change” fails as such a justification. Frustration or cynicism over the whole issue ensues.

Hi JMG – I second leonard anderson #9 on a blog post about C. G. Jung and the occult for the 5th Wednesday post. Thanks.

For the fifth Wednesday, my vote is for a post about the practical aspects of “unity of will”.

There was a question on Monday which related to that:

https://ecosophia.dreamwidth.org/243707.html?thread=42604539#cmt42604539

and I’m wondering if you could expand on the practical questions of intention and unified will. E.g. any tips or strategies on how to decide about the “next” or current intention. And even more importantly: since none of us is living in a vacuum, how to integrate the unified will with all the other things which also have to be done (e.g. job, household), or which are also important (e.g. kids, elderly relatives, pets, a garden to tend to, etc).

I do realize that this is highly personal, and also that it requires a lot of “work” to get to the place where things flow smoothly, as you’ve already pointed out on MM. 😉 But you’ve been grappling with these things for quite a while, and have maybe also talked to other people who have – there must be some lessons or approaches which you could share.

So how about a “Practical Guide to Unified Will and Clear Intentions, for Real-Life People”?

Milkyway

Re 5th Wednesday post – I know this one is really coming out of left field, but wot the heck …. a post on the works of late author Cormac McCarthy who’s been described as a “mystic nihilist”. He’s really almost too bleak for me, but he does touch on “cosmic daemonium” themes that are in a way similar to Lovecraft, even quotes Jacob Boehme in an epigraph in his novel Blood Meridian. And he really is a good writer.

More realistically, a walk-through of Yeats’ A Vision would be great.

Thanks.

So true. At age 16 in a Wisconsin farm house I watched the moon landing on our small black and white TV. We were not rich by any means but we had electric lights, a thermostat controlled oil furnace, color camera, record player, records, access to a library in town, good nearby shopping, 6 day a week mail delivery, a refrigerator, large freezer for our homegrown meat and vegetables, electric stove, electric kitchen mixer, am/fm radio, electric vacuum cleaner, two bathrooms with toilet, shower, bath tub, hot and cold running water, electric washer and dryer that worked as well as today’s (washer actually better than today’s “efficient models”), daily delivery of a newspaper, electric dishwasher, electric sewing machine, power tools, weekly delivery of magazines, ability to order things through catalogs, car and truck that drove as fast and easily as cars today, good roads, good rifles and shotguns, access to modern dentistry and medicine, movie theatres, jets that traveled as fast as today’s (though jet travel was not part of our lives). This was 54 years ago, a half century, no real progress since then IMO.

And oh yes, I remember assurances from the 1960’s that fusion power was just 20-30 years away and that study of interstellar radio waves would turn up evidence of aliens.

Dear JMG and group,

Tom Welsh (#37) raises a major point. The knowledge base of the typical journalist to write about most anything is nonexistent. This is probably more to do with a journalism major, but also reflects poor education overall. I frequently give mainstream media interviews and am often frightened at the ignorance of these, usually, young people. I can remember when reporters had decades covering a beat and often practical experiences before taking up journalism. The financial death spiral of the media doesn’t help.

On the topic of people waiting for either apotheosis or Armageddon, I note that the bulk of the Christian calendar is called common or ordinary time. That is when the mundane work is done in patient anticipation.

How about a fifth week post on concepts of time (linear, cyclical) and how that colors our sense of the future?

Patricia M, I’ll take a look when I have some spare time.

Pygmycory, true enough. I hope we can avoid the latter.

Clay, that’s exactly it. Science™, as we should probably call it, has nothing in common with actual scientific practice; it’s a mantra, a mythology, an act of blind faith in the supposed omnipotence of progress, and it’s a pervasive source of bad decisions these days.

Pygmycory, agreed. Lately, especially, the signal to noise ratio in announcements of scientific breakthroughs has dropped very close to zero.

Kurtyigit56, nah, I’ve got a far crueler fate in mind for them: being laughed at, and then ignored, and then forgotten.

DaveOTN, thank you. That’s definitely belly laugh fodder.

Booklover, oh, it makes perfect sense. Two media celebrities who are both darlings of the overprivileged classes — it’s a match made in the most oxygen-deprived of corporate boardrooms.

Will1000, I had something like that in mind, and then David BTL sent me the link to the Chowdhury piece and I started giggling. I’ve tabulated your vote.

Leonard, you’re welcome, and I’ve counted your vote.

Pygmycory, another good point. I expect a lot of really bad fiction churned out by chatbots to flood the corporate publishing field shortly, and cause immense harm.

Epileptic Doomer, I don’t happen to know the current hiring policies in red states, but it wouldn’t surprise me at all if you had a shot. Florida and Texas are having to hire teachers hand over fist because so many people are fleeing from blue states.

Ken, and that’s also an important point, of course. The downsides to perpetual growth are getting very hard to miss these days, unless you’re a privileged media flack. I’ve tabulated your vote.

Siliconguy, at least they’ve got job opportunities growing rice. I’m not sure what our graduates are going to do.

Hakan, thanks for this. My take when it comes to AI is that we don’t have it yet and probably won’t get there — LLMs (large language models) and their visual equivalents can fake intelligence but don’t actually have it, and the more our societies rely on them, the more randomness will be inserted into critical systems and the more quickly those systems will fall apart. As for the rest, that’s a much bigger question than a comment can hold.

Sam, it’s been the central strategy of the American elite since colonial times to keep the working classes fighting each other so they don’t unite against the elites; the current ethnic divisions are just the latest iteration of that strategy. (They got it from the British, who used it for centuries to hold onto their empire — that’s why every former British colony has intractable ethnic conflicts; the British went out of their way to create and foster them.) The thing that fascinates me is that the strategy seems to be faltering now, as witness the rise of right-wing populist rappers like Tom MacDonald and the multiethnic popularity of Oliver Anthony’s “Rich Men North of Richmond” — now up to 15 million views on YouTube. We may be moving into a new game.

Russell, that’s another great example — and the attitude you describe, “We want a new battery technology and therefore the universe is obliged to give us one,” is a classic case of how Science™ makes people stupid.

Fallingleaves, trust Tom to do the job right. Many thanks for this.

Asdf, nah, it’s a good turn of phrase. Some folklore consists of beliefs that once made sense, but lost all their genuine meaning a long time ago.

Bergente, but of course! Without an endless series of ever-greater breakthroughs, why, people would have to live with the consequences of their actions — and we can’t have that, of course. The decline in actual breakthroughs is one more piece of evidence that the law of diminishing returns applies to scientific research as a whole.

Luke, I’ve added it to the list.

Curt, how have I lived all these years and not heard of this? Thank you! I wonder if I can get a handcrafted manual encabulator… 😉

Greg, there’s a reason why Retrotopia, with its imagery of deliberate return to a pre-1960s technology, is my most popular novel.

Justin, thanks for this.

Nick, there’s an entire movement these days of ateliers and small art schools outside the academic industry — check out the website for Grand Central Atelier as an example — and the music of Alma Deutscher and other neoclassical composers suggests that a similar groundswell is under way in music. (So, in a different genre, does the runaway popularity of Oliver Anthony.) Movies will come later, though the unexpected success of Sound of Freedom shows that things may be moving in that direction already.

Quin, thanks for this as always.

Jeanne, and that’s exactly it: all those grand promises and threats and images of utopia and doom turned out to be wasted breath. That’s really sinking in now.

Jeff, my father used to quote a simple definition of “expert”: X is the unknown, a spurt is a drip under pressure, and so an expert is an unknown drip under pressure. You have to admit, he was right…

Roldy, that’s the point where scientific research stopped being something that individuals could do on their own time and became prohibitively expensive and complex. From that point the bar has risen even further — to the point that many of the things that might theoretically be possible can’t be done with the resources the whole planet can spare.

Samuel, the world moves at its own speed, which is much slower than most people think. I’ve added your vote to the list.

Samurai_47, I bet that if carbon taxation actually gets put in place, they’ll scream like gutshot banshees when they have to pay! I may have to find a copy of Herman’s book. It sounds highly amusing.

Bruce, yep. As usual, Tom’s the guy to follow when it comes to crunching the numbers.

Jerry D, of course! The UFOs will bring us working fusion reactors, flying cars, and unicorns barfing rainbows. Oh, and an honest politician or two — or is that too much to hope for? 😉

Tom, that would be funny if it wasn’t so sad. For more than 75 years now, the paid flacks of big science have been insisting that commercial fusion power was only 20 years in the future. At what point will you finally admit that you’ve been conned? As for Clarke’s “laws,” I really do need to do a post about them, don’t I? Clarke was wrong — not just a little wrong, but utterly, catastrophically wrong — on all three counts.

Larry and Roldy, I’ve added both of those to the list.

Tom, but of course! It’s always the people who don’t know much about science who are convinced that it’s omnipotent. The problem, of course, is that politicians all come from that category.

Have to point out that you are wrong in one respect. I was recently informed by my good friend (bless her technophile soul) who follows such news quite closely, that, after the latest breathtakingly exciting (and horrifically expensive) advance in technology, the Quest for Fusion Power has officially been shortened to just 15 years in the future.

Therefore, by the time Las Vegas looks like it will in the image, success will only be 3 or 4 years in the future.

Apparently the timeline of the futurology is on a logarithmic scale.

JMG,

Fifth Wednesday candidate: please count me in for another post on scarcity industrialism. And maybe one dealing with the awkward fact that things are working less and less reliably in general.

I’ve noticed it quite recently in Poland in Internet access to my bank; shelves in local markets have empty patches as a normal feature, and it appears that this won’t change; things simply sliding down is just a fact. Most people around me still refuse to come to terms with the permanence of the downward trajectory…

Walt, the whole “post-scarcity” business is perhaps the most clinically insane aspect of current fantasies of progress. The entire body of physical law stands in the way of that delusion, and yet people who ought to know better cling to it.

Wqjcv, granted.

David BTL, duly tabulated.

William, yeah, I probably should have included that with the UFOs and the barfing unicorns.

Dennis, ha! I’m not at all surprised — and of course you’re quite right.

Justin, duly tabulated.

Thomas, it’s not at all surprising. In failing societies, elite groups hijack the culture and institutions to serve their momentary advantage, and never realize that this means everyone outside the elite will end up treating the culture and institutions as disposable. As for the end of the arc, I’ll talk about that in future posts.

Re: Carbon taxes, Washington State has one now, my last fill up was $5.02 per gallon. It’s clearly a “crush the rural population” tax.

As for science reporters, I let my subscription to Scientific American run out because the magazine kept getting thinner, more and more articles were written by science reporters and not the people doing the work, and they got very woke. After decades of insisting that there was no such thing as race, they decided race did exist and it was the most important thing of all. And in their long list of newly discovered races, all but one deserved capital letters as proper nouns. And they were proud of their newfound racism too.

For a fifth Wednesday topic, I’ll second JPM’s vote for ” …a dime museum tour of future warfare & military strategy”.

This topic has been a candidate a couple of times before, but I would request adding warfare on the astral plane and how it would affect material plane warfare.

Example: we sure enough have a weaponized internet now that is used by many to “cause changes in consciousness in accordance with (ill) will.” I wonder how that will work out in a future resource-constrained environment?

Correction to previous comment:

A proposal for a blogpost: the magic and sophistry used by factions *supporting and denying* climate change.

(Getting late this side of the Atlantic).

Hi JMG,

very long time lurker here.

I used to admire your clarity of thought, and looked forward to your essays, that always made me think I understood the world a little bit more.

In the last few years though, I increasingly felt that you started to use your considerable intellect and eloquence to shoehorn reality into a narrative, rather than to shed light on reality.

Covid and Ukraine come to my mind, but let’s keep the focus on the current topic. You say that the last 30 years of climate change activism did nothing. The same article you linked however features graphs showing that emissions in EU and US have slowly decreased in that period. It’s true that global emissions are increasing, but that is due to the contribution of developing countries, where climate activism – if any is present – is bound to be very recent. This clearly does not prove that activism is actually working; but the fact that you do not even mention it, is an example of why I feel you have joined the vast majority, and started picking the facts that conform to a specific narrative.

Anyway, I guess I’m just grieving the old Archdruid.

PS

Since you are asking for next Wednesday’s topic… I sympathize with your distrust of celebrities and politicians, but I do not understand what you think the best course of action would be. Ignore the problem, burn all the fossil fuels left, and cope with the outcome?

kurtyigit56 @ 5, Are you willing to do just that in your own country, Turkey, I believe?

Epileptic Doomer @ 11, Have you considered private teaching? Maybe try to connect with homeschoolers, Church groups, and so on?

Samuel, about slow descent, etc.: I am going to venture a categorical statement. Anyone in the USA who thinks they will get to be in charge when TSTF, won’t be. I am betting on America’s democratic and self-reliant roots running deep. I am beginning to see, across the country, at the local level, capable and honest people stepping up to do what they can. Finally.

@ JMG – I’m a bit confused. On the one hand, you write that anthropogenic climate change is a real predicament, slowly undermining industrial civilization, and will be a prime cause of the decline and fall of said civilization. But then you write that the polar bear populations may be okay for now, and the Arctic isn’t ice free, for now, so the climate scientists don’t know what they’re talking about. Yet I recall in the past you’ve noted that a number of well-published benchmarks dealing with the climate have been hit decades before studies suggested they would. Which is it?

Also, my vote for next week’s topic is for warfare in the de-industrial future.

@ Samuel #29 – I know that feeling. I think the dam is cracking and when it does burst, the best anyone can hope for is to have positioned themselves far enough up the metaphorical slopes to not get dragged into the floodwaters by everyone who didn’t. I know that sounds kinda callous, but what can one do, when none of us have an iota of influence over the course of broader events?

My vote is for the next financial crisis causing the rupture that finally breaks the dam. A trillion dollars in commercial real estate loans come due in the next year, and a lot of the real estate is vacant thanks to the remote work revolution. The dam may already be breaking with the spike in global wheat prices thanks to the Ukraine War. A lot of people in a lot of poor countries can’t afford to eat right now, and the last time that happened, we got the Arab Spring. What do you think?

I also vote for a post on scarcity industrialism

For your fifth Wednesday post, I would love for you to respond to Sebastian Morello’s essays on hermeticism, in whatever way seems most interesting and relevant to you.

https://europeanconservative.com/articles/essay/can-hermetic-magic-rescue-the-church-part-iii-the-magi-return/

Hello everybody. I cannot believe that somebody is still believing in this fusion thing. Here we have real problems like crumbling bridges and a rapidly downsizing economy. The factory where I used to work was closed down, so I had to look for work elsewhere, and now I am worse off, and a lot of people have had enough of hearing about jetting to the moon etc. JMG, the Polish government is throwing a hissy fit because it has lost access to Niger’s uranium along with France, and now people are screaming about invading Niger to “restore democracy,” but we gave everything to Ukraine, so it might be a problem for both nations….

BTW the Polish nuclear reactor is always (drumroll…) 15 years in the future…

My grandmother, when she was 45, went with some colleagues on a trip to Żeroń in 1982, where the locals proudly announced that they were staying at the site of a gigawatt nuclear reactor. 40 years later nothing has been built, and even now the government can’t decide which offer to choose or which corporation is going to do it…

My apologies if this is off topic or a repeat and delete as necessary, but from an American view I use a framework of the republic of Washington, the republic of Lincoln and the republic of FDR.

Which does mean that we are due for another institutional change due to demographics and technology changes (if not as big of a geographic change as the earlier ones). The failure of the current institutions and their claims of expertise is in line with this.

There will not be a republic of Trump, but I can see Trump being either a Wilson or a Hoover to a future FDR.

I also vote for your view on karma.

Thanks, Drew C

About 15 or so years ago, I would read physorg.com daily. The headlines would say something absurd like, “Graphene will create hurricane resistant homes.” Then, scrolling down, you’d eventually get to the part where they did a stress test on a small fiber of graphene, and extrapolated that minuscule data point to conclude that soon, all homes in Florida could resist a Category 5 hurricane. At this point, you’d always see words like “speculate” or “predict”, probably by the time everyone has stopped reading the article.

I eventually learned that those articles on physorg were developed by the marketing… er, press office of universities to get more funding.

Wonder if this corresponds in any way with re-enchantment!

https://tvtropes.org/pmwiki/pmwiki.php/ReedRichardsIsUseless/MarvelUniverse

The slogan ‘Reed Richards is Useless’ was coined by comic book fans to highlight the way that the great inventors in comic books never improve everyday life with their science. The comic book answer is that the technology exists but is being withheld by the government, the bad guys or the good guys until humanity proves itself worthy.

On the question of AI: I decided recently to check out the hype and had a “chat” with Bing AI about covid jabs. We first agreed that long term safety data was essential to understanding the risks and benefits of jabs. We then agreed that there was no long term safety data for covid jabs. But the AI would not agree that the long term benefits and risks of covid jabs were therefore unknowable; it repeatedly assured me they were safe, as the WHO says so. I told it there was a hole in its logic. It then told me it uses large language models and algorithms and is not capable of human reasoning.

It is a very useful new search engine, like Google was in the early days. But it is not in any meaningful way intelligent; it will even tell you this if you ask the right questions. It is, however, smart enough to fool what passes for journalists these days, but that is setting the bar very low indeed.

Greetings, JMG and all.

Re: Fifth Wednesday

First, though, I want to thank you, JMG, for your writing, teaching, and work in general — I am a quiet person unlikely to pop my head over the parapet often, so it would be remiss in the extreme for me not to take this possibly very rare opportunity! I’ve learned a great deal from you and always find your thoughts well worth listening to, even when I occasionally disagree with you (sometimes I continue to disagree, sometimes I decide that you were right!); I also recommend reading you to others.

I would be very interested in any thoughts or observations you might have about any instances (particularly recently) where your expectations have been violated — those “wait, what????” moments (or those that others might be reporting to you of their own). I’ll admit that I like these in general, but I think they’re only increasingly useful (well, at least to me) as things keep seething and roiling and changing all the more and more and more. For me, it’s generally not a case of “oh, I thought X but now I see it is Y” but rather “oh, I thought X but…uh…well, not X, I guess!” and onwards down a new path.